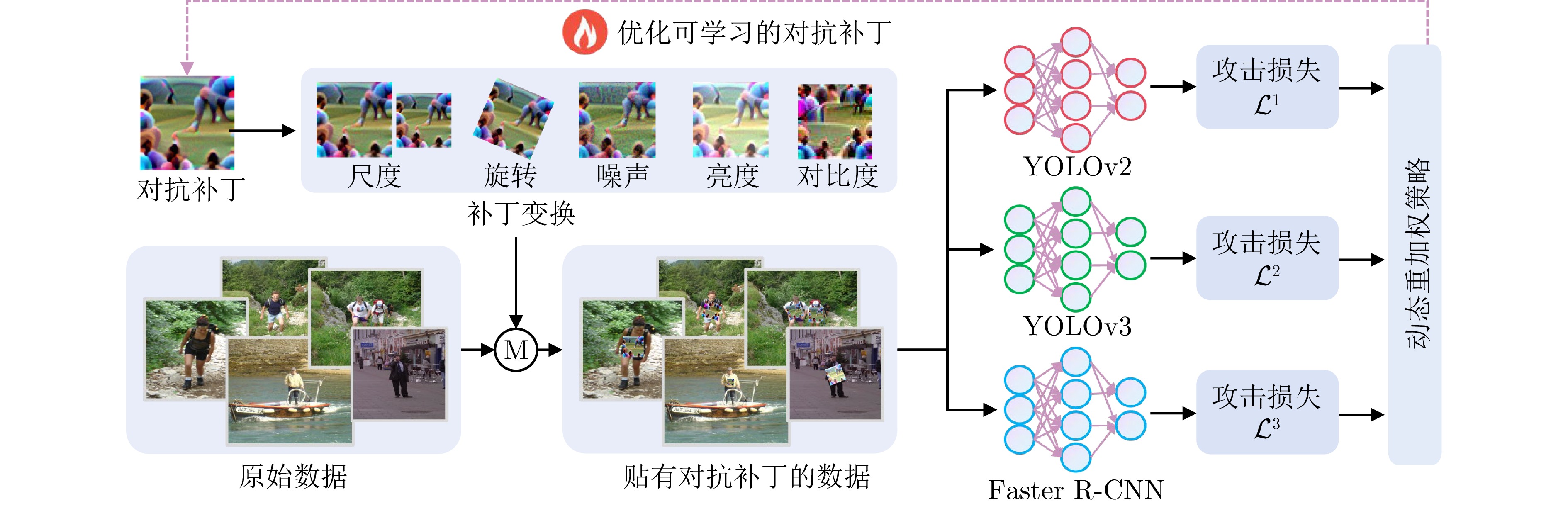

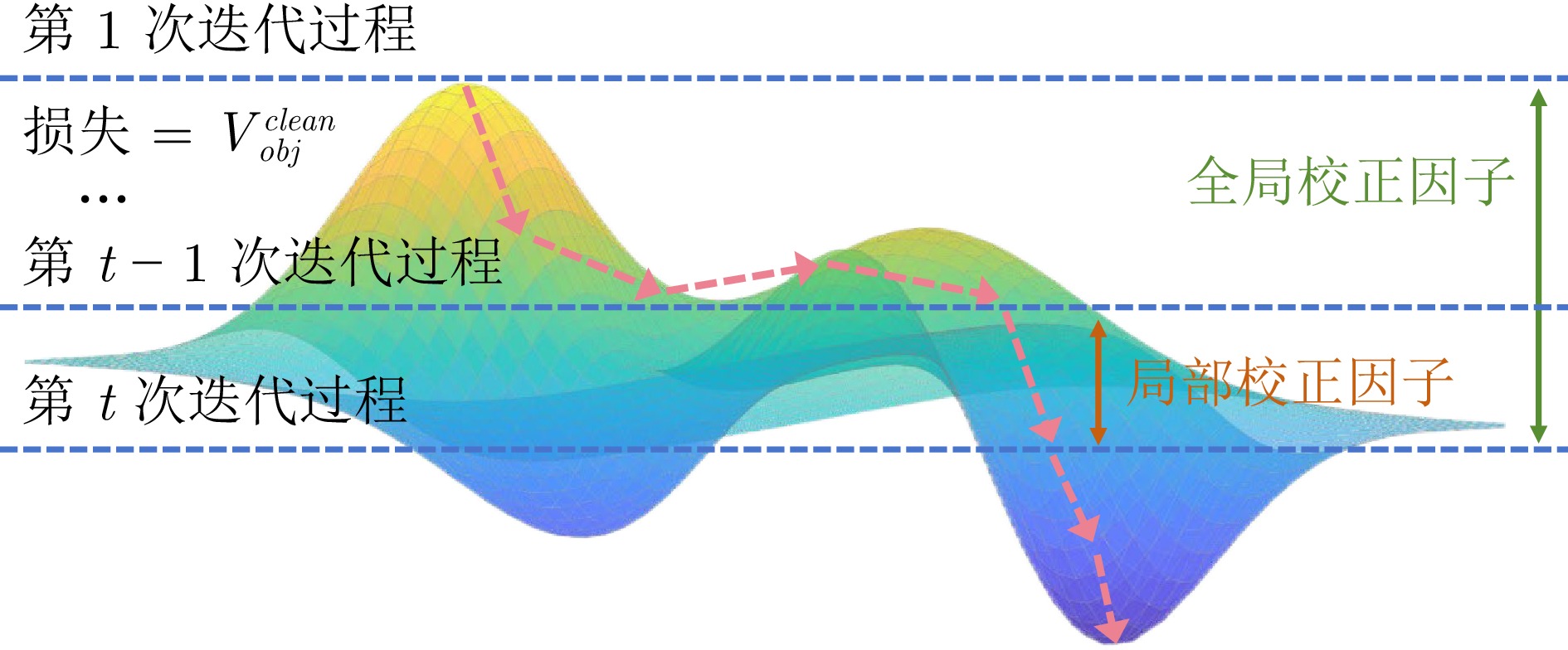

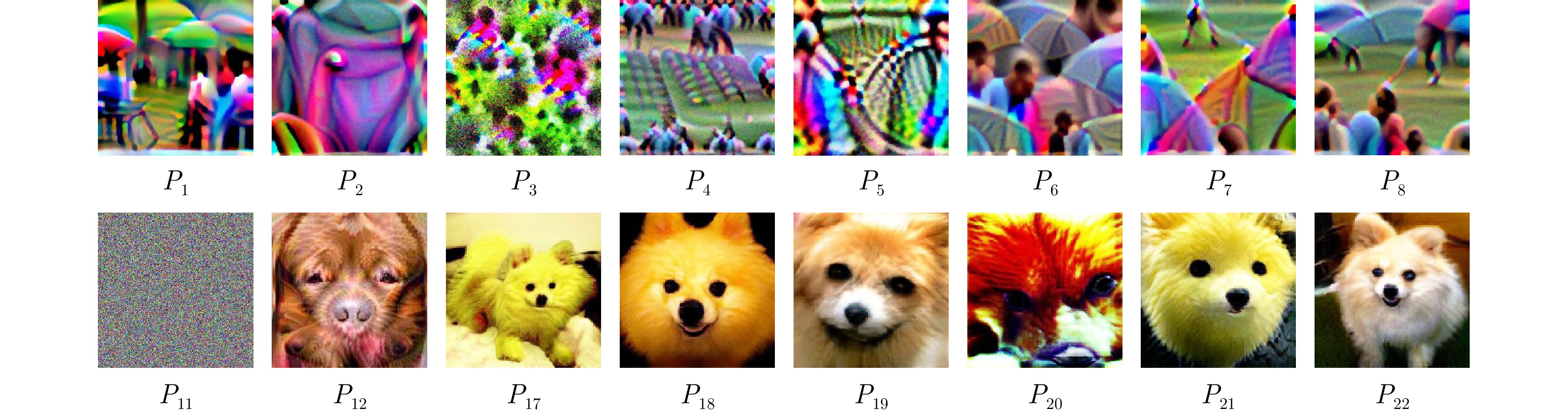

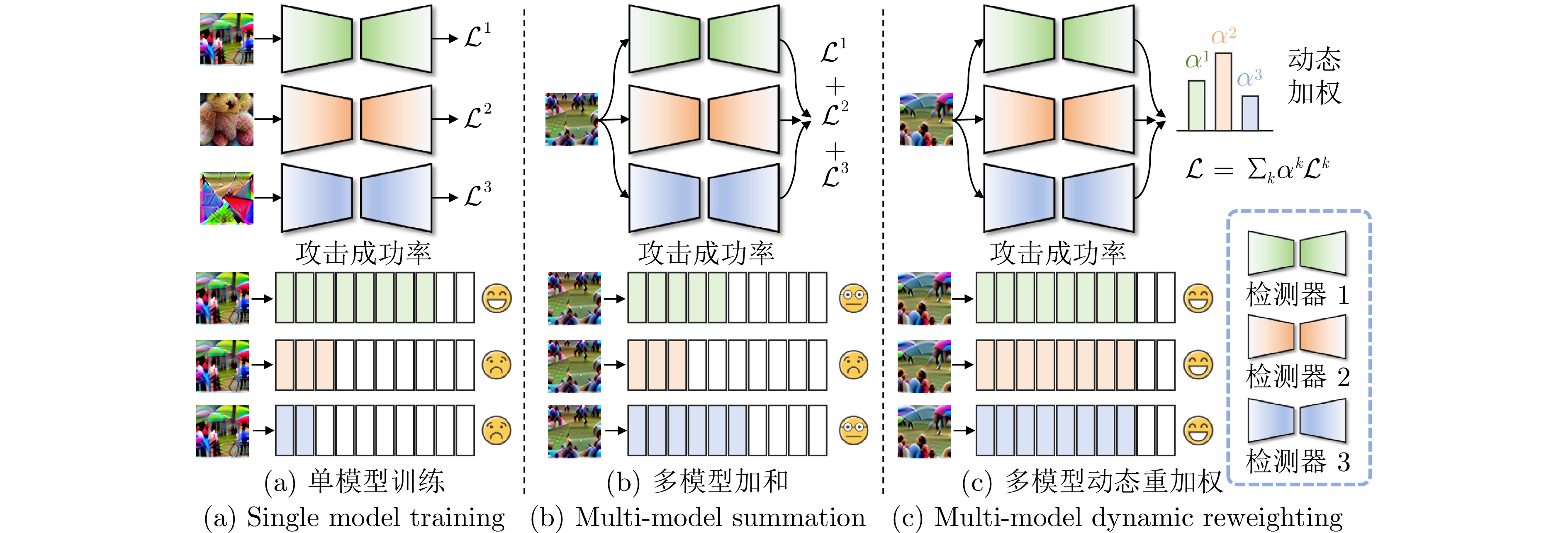

Abstract: With the widespread deployment of object detection models in real-world applications, their security has become a research focus. Adversarial attacks that use carefully designed adversarial patches can induce models to produce erroneous predictions, revealing inherent vulnerabilities in the decision-making of deep neural networks. To improve the transferability of adversarial patches across detectors, most existing methods rely on static weight fusion for joint optimization. Such strategies struggle to reconcile the differences in vulnerability distribution and optimization dynamics among detectors, so attack effectiveness is imbalanced across models and transferability is significantly limited. To address this challenge, this paper proposes a transferable adversarial patch generation framework based on a multi-task dynamic reweighting mechanism. The framework introduces a global correction factor and a local correction factor, which dynamically adjust task weights from two perspectives: the overall optimization progress across tasks and the fine-grained convergence behavior of each individual task. This design improves coordination and robustness during multi-model joint optimization. Extensive experiments in both the digital and physical domains demonstrate that the proposed method significantly enhances the transferability of adversarial patches across object detectors and achieves strong attack performance in real-world physical deployments.
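To make the idea of dynamic reweighting concrete, the following is a minimal sketch of how a global and a local correction factor could be combined into per-detector loss weights. The formulas are not given in this excerpt, so everything here is an assumption made for illustration: the function name `dynamic_task_weights`, the form of the global factor (remaining loss relative to the start, normalised by the mean over detectors), the form of the local factor (ratio of the two most recent losses), the softmax combination, and the `temperature` parameter. Losses are assumed to be strictly positive.

```python
import math


def dynamic_task_weights(loss_history, temperature=1.0):
    """Return one weight per detector from its recorded attack losses.

    loss_history maps a detector name to a list of positive losses
    (oldest first, at least two entries). Hypothetical helper, not the
    formulation used in the paper.
    """
    names = list(loss_history)

    # Global factor (assumed form): fraction of the initial loss that
    # remains, divided by the mean of that fraction over all detectors.
    # Detectors that lag behind the group get a value above 1.
    remaining = {k: loss_history[k][-1] / loss_history[k][0] for k in names}
    mean_remaining = sum(remaining.values()) / len(names)
    global_factor = {k: remaining[k] / mean_remaining for k in names}

    # Local factor (assumed form): ratio of the two latest losses.
    # Values near 1 mean the detector's own loss has nearly stalled.
    local_factor = {k: loss_history[k][-1] / loss_history[k][-2] for k in names}

    # Combine both factors and normalise with a numerically stable softmax.
    scores = {k: global_factor[k] * local_factor[k] / temperature for k in names}
    peak = max(scores.values())
    exps = {k: math.exp(scores[k] - peak) for k in names}
    total = sum(exps.values())
    return {k: exps[k] / total for k in names}


# Toy loss curves: the YOLOv3 task is progressing slowest.
history = {
    "YOLOv2": [1.0, 0.5, 0.3],
    "YOLOv3": [1.0, 0.9, 0.88],
    "FRCNN": [1.0, 0.7, 0.6],
}
weights = dynamic_task_weights(history)
for name, weight in weights.items():
    print(f"{name}: {weight:.3f}")
```

In this toy run the slowest-converging detector receives the largest weight, which is the qualitative behaviour a reweighting scheme of this kind aims for. A static sum would correspond to fixing all weights to the same constant.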

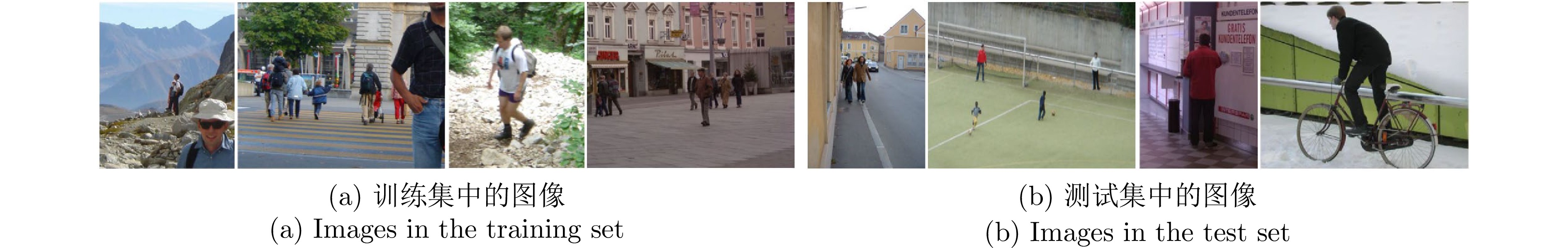

Table 1 Quantitative comparison of attack performance on the InriaPerson dataset (%)

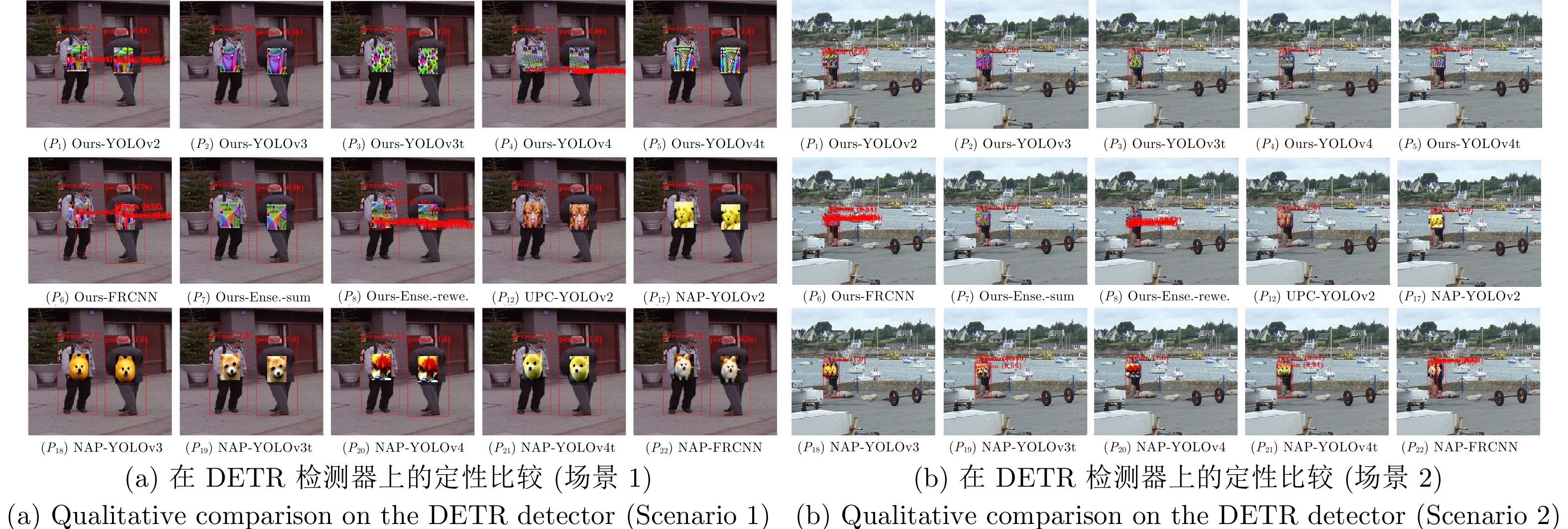

| Method | YOLOv2 | YOLOv3 | YOLOv3-tiny | YOLOv4 | YOLOv4-tiny | Faster R-CNN | DETR |
|---|---|---|---|---|---|---|---|
| (P1) Ours-YOLOv2 | 2.68 | 22.51 | 8.74 | 12.89 | 4.74 | 39.41 | 22.05 |
| (P2) Ours-YOLOv3 | 36.47 | 15.07 | 66.20 | 44.39 | 39.85 | 57.38 | 38.84 |
| (P3) Ours-YOLOv3-tiny | 57.61 | 67.81 | 6.79 | 58.74 | 44.45 | 64.52 | 46.97 |
| (P4) Ours-YOLOv4 | 28.33 | 31.71 | 44.35 | 4.37 | 30.74 | 44.11 | 33.14 |
| (P5) Ours-YOLOv4-tiny | 58.46 | 63.11 | 44.23 | 51.15 | 9.88 | 70.18 | 46.86 |
| (P6) Ours-Faster R-CNN | 7.55 | 7.09 | 10.27 | 10.89 | 10.18 | 18.47 | 18.19 |
| (P7) Ours-Ensemble-sum | 12.27 | 14.98 | 43.70 | 16.49 | 27.10 | 41.35 | 38.89 |
| (P8) Ours-Ensemble-reweight | 5.90 | 1.86 | 3.01 | 3.94 | 6.81 | 15.38 | 8.89 |
| (P9) Gray Patch | 72.66 | 74.17 | 67.52 | 66.52 | 60.93 | 61.54 | 52.86 |
| (P10) White Patch | 69.63 | 74.93 | 66.45 | 72.48 | 65.13 | 65.40 | 46.52 |
| (P11) Random Noise | 75.03 | 73.75 | 78.91 | 76.71 | 76.66 | 73.00 | 51.18 |
| (P12) UPC-YOLOv2 | 48.62 | 54.40 | 63.82 | 64.21 | 63.03 | 61.87 | 47.58 |
| (P13) DAP-YOLOv3 | 35.66 | 30.48 | 47.36 | 37.11 | 39.33 | 62.30 | 40.74 |
| (P13) DAP-YOLOv3-tiny | 59.19 | 58.73 | 6.99 | 41.62 | 30.42 | 70.43 | 48.41 |
| (P14) DAP-YOLOv4 | 25.40 | 45.22 | 46.73 | 24.27 | 51.33 | 55.05 | 39.59 |
| (P15) DAP-YOLOv4-tiny | 23.90 | 47.54 | 23.08 | 50.62 | 12.99 | 60.00 | 37.08 |
| (P16) NAP-YOLOv2 | 12.06 | 43.05 | 32.12 | 50.56 | 39.47 | 52.54 | 41.78 |
| (P18) NAP-YOLOv3 | 56.67 | 34.93 | 41.46 | 56.29 | 53.59 | 61.78 | 43.17 |
| (P19) NAP-YOLOv3-tiny | 31.61 | 28.81 | 10.02 | 65.13 | 31.62 | 55.08 | 35.17 |
| (P20) NAP-YOLOv4 | 44.27 | 56.59 | 56.61 | 22.63 | 58.23 | 59.42 | 44.76 |
| (P21) NAP-YOLOv4-tiny | 34.68 | 37.79 | 21.69 | 46.80 | 23.70 | 59.97 | 37.78 |
| (P22) NAP-Faster R-CNN | 28.26 | 39.05 | 37.06 | 51.46 | 28.68 | 42.47 | 45.09 |

Note: In the tables and figures below, YOLOv3-tiny and YOLOv4-tiny are abbreviated as YOLOv3t and YOLOv4t, Faster R-CNN as FRCNN, and Ensemble-reweight and Ensemble-sum as Ense.-rewe. and Ense.-sum, respectively.

Table 2 Quantitative comparison on the COCO-Person dataset (%)

| Method | YOLOv2 | YOLOv3 | YOLOv3t | YOLOv4 | YOLOv4t | FRCNN |
|---|---|---|---|---|---|---|
| Targeted training | 7.41 | 30.92 | 12.82 | 15.22 | 17.40 | 27.13 |
| Ours-Ense.-sum | 18.80 | 25.13 | 37.43 | 23.82 | 27.87 | 40.87 |
| Ours-Ense.-rewe. | 9.84 | 6.09 | 11.02 | 11.01 | 14.79 | 24.17 |

Table 3 Quantitative comparison on the CCTV-Person dataset (%)

| Method | YOLOv2 | YOLOv3 | YOLOv3t | YOLOv4 | YOLOv4t | FRCNN |
|---|---|---|---|---|---|---|
| Targeted training | 0.82 | 8.14 | 4.43 | 0.43 | 5.95 | 17.16 |
| Ours-Ense.-sum | 9.41 | 7.42 | 39.20 | 2.60 | 21.76 | 37.89 |
| Ours-Ense.-rewe. | 2.89 | 0.50 | 2.88 | 0.26 | 4.00 | 12.20 |

Table 4 Quantitative comparison of ablation experiments (%)

| Method | YOLOv2 | YOLOv3 | YOLOv3t | YOLOv4 | YOLOv4t | FRCNN |
|---|---|---|---|---|---|---|
| Only with $g^k$ | 19.36 | 18.05 | 34.99 | 24.87 | 27.69 | 38.37 |
| Only with $l^k$ | 10.98 | 19.46 | 41.58 | 21.98 | 16.47 | 42.37 |
| Ours-Ense.-rewe. | 5.90 | 1.86 | 3.01 | 3.94 | 6.81 | 15.38 |

Table 5 Quantitative evaluation of attack performance under typical defense models (%)

| Method | Ours-FRCNN | Ours-Ense.-sum | Ours-Ense.-rewe. |
|---|---|---|---|
| No defense | 18.47 | 41.35 | 15.38 |
| Oddefense | 30.41 | 44.66 | 18.16 |
| PBCAT | 37.94 | 55.57 | 24.73 |

| Method | Ours-YOLOv3 | Ours-Ense.-sum | Ours-Ense.-rewe. |
|---|---|---|---|
| No defense | 15.07 | 14.98 | 1.86 |
| FNS | 30.13 | 29.98 | 13.16 |

| Method | Ours-YOLOv3t | Ours-Ense.-sum | Ours-Ense.-rewe. |
|---|---|---|---|
| No defense | 6.79 | 43.70 | 3.01 |
| FNS | 6.96 | 47.45 | 4.59 |

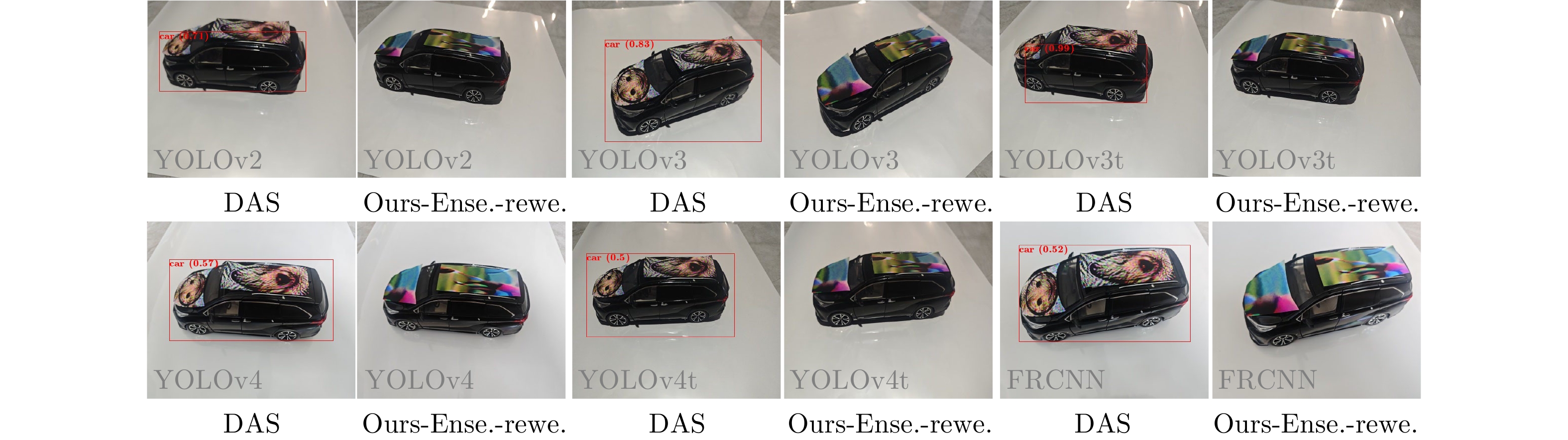

Table 6 Quantitative comparison with transferable attack methods DAS and AdaEA (%)

| Method | YOLOv2 | YOLOv3 | YOLOv3t | YOLOv4 | YOLOv4t | FRCNN |
|---|---|---|---|---|---|---|
| DAS | 37.32 | 35.34 | 2.51 | 32.92 | 1.16 | 39.09 |
| Ours | 33.05 | 30.97 | 1.32 | 25.20 | 0.68 | 32.95 |
| $\Delta$ | 4.27 $\downarrow$ | 4.37 $\downarrow$ | 1.19 $\downarrow$ | 7.72 $\downarrow$ | 0.48 $\downarrow$ | 6.14 $\downarrow$ |
| AdaEA | 12.81 | 2.68 | 5.57 | 4.09 | 5.28 | 34.01 |
| Ours | 5.90 | 1.86 | 3.01 | 3.94 | 6.81 | 15.38 |
| $\Delta$ | 6.91 $\downarrow$ | 0.82 $\downarrow$ | 2.56 $\downarrow$ | 0.15 $\downarrow$ | −1.53 $\downarrow$ | 18.63 $\downarrow$ |

Table 7 Quantitative comparison of attack performance on physical-domain videos (%)

| Method | YOLOv2 | YOLOv3 | YOLOv3t | YOLOv4 | YOLOv4t | FRCNN |
|---|---|---|---|---|---|---|
| Ours-Ense.-sum | 49.62 | 33.56 | 47.32 | 34.00 | 48.16 | 33.60 |
| Ours-Ense.-rewe. | 32.94 | 17.70 | 19.55 | 22.60 | 35.10 | 16.78 |
| $\Delta$ | 16.68 $\downarrow$ | 15.86 $\downarrow$ | 27.77 $\downarrow$ | 11.40 $\downarrow$ | 13.06 $\downarrow$ | 16.82 $\downarrow$ |
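The $\Delta$ row of Table 7 appears to be the Ours-Ense.-sum value minus the Ours-Ense.-rewe. value for each detector. The short sketch below recomputes it from the tabulated numbers as a consistency check. The variable names are illustrative, and the mean over detectors is an extra summary that is not reported in the table.

```python
# Values copied from Table 7 (physical-domain videos, %).
ense_sum = {
    "YOLOv2": 49.62, "YOLOv3": 33.56, "YOLOv3t": 47.32,
    "YOLOv4": 34.00, "YOLOv4t": 48.16, "FRCNN": 33.60,
}
ense_rewe = {
    "YOLOv2": 32.94, "YOLOv3": 17.70, "YOLOv3t": 19.55,
    "YOLOv4": 22.60, "YOLOv4t": 35.10, "FRCNN": 16.78,
}

# Per-detector reduction achieved by reweighting over the static sum.
delta = {name: round(ense_sum[name] - ense_rewe[name], 2) for name in ense_sum}

# Mean reduction over the six detectors (a derived figure, not in the table).
mean_delta = round(sum(delta.values()) / len(delta), 2)

for name, value in delta.items():
    print(f"{name}: {value:.2f}")
print(f"mean: {mean_delta:.2f}")
```

The same subtraction reproduces the $\Delta$ rows of Table 6, where a negative entry means the baseline scored lower than the proposed method on that detector.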

