RC-LIO: LiDAR-inertial Odometry Enhanced by Multi-sensor Fusion Compensation Under Degraded Environments


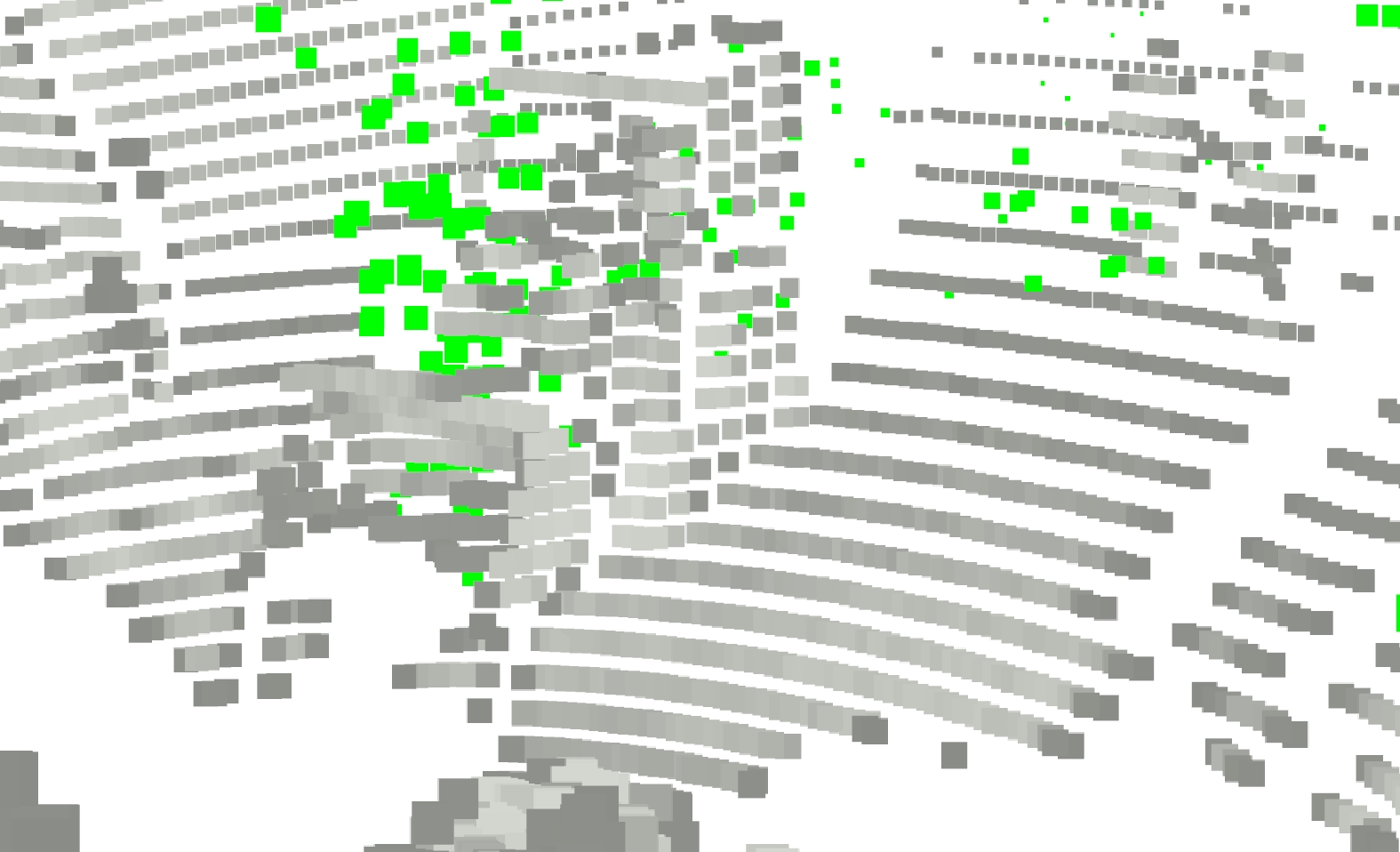

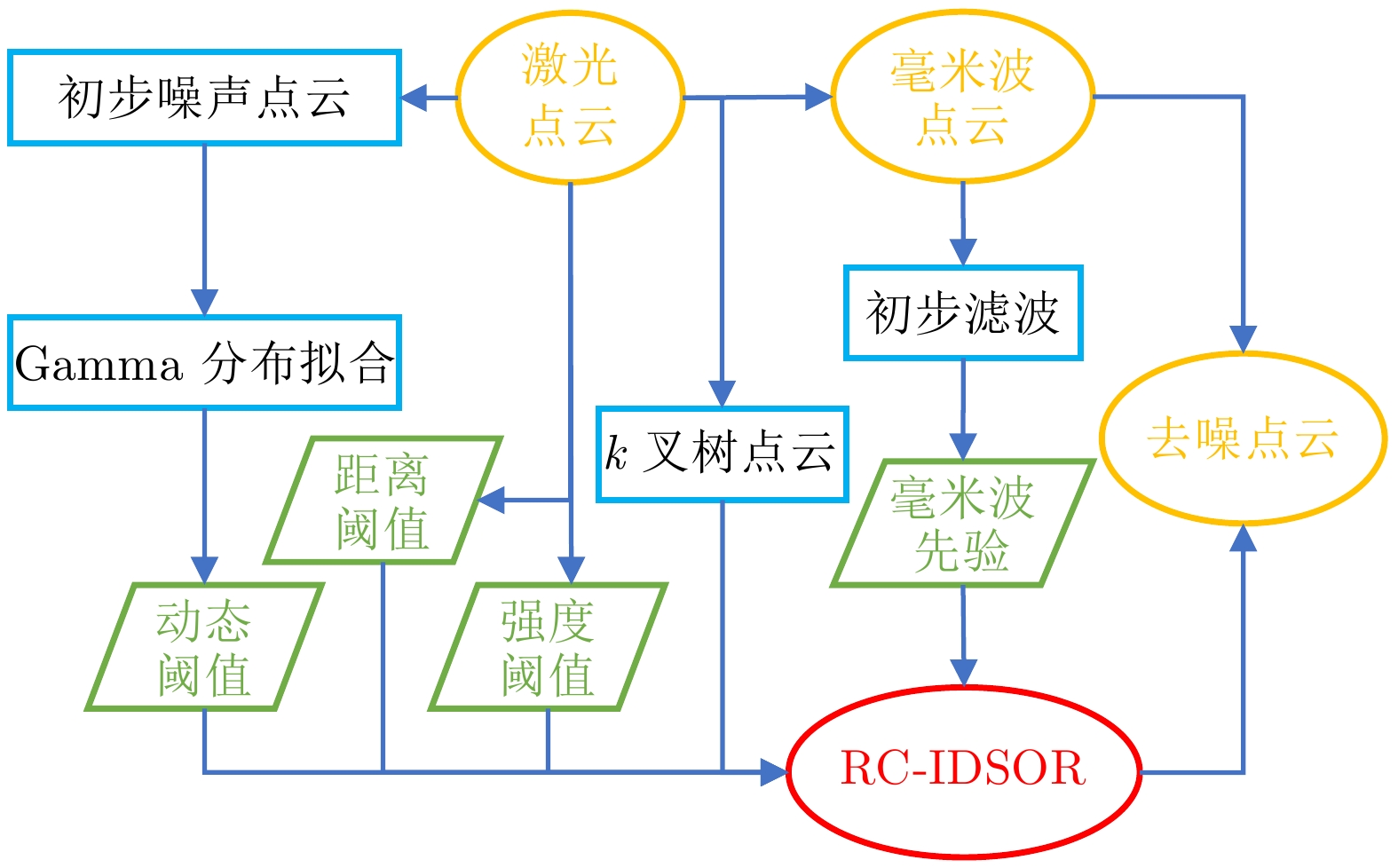

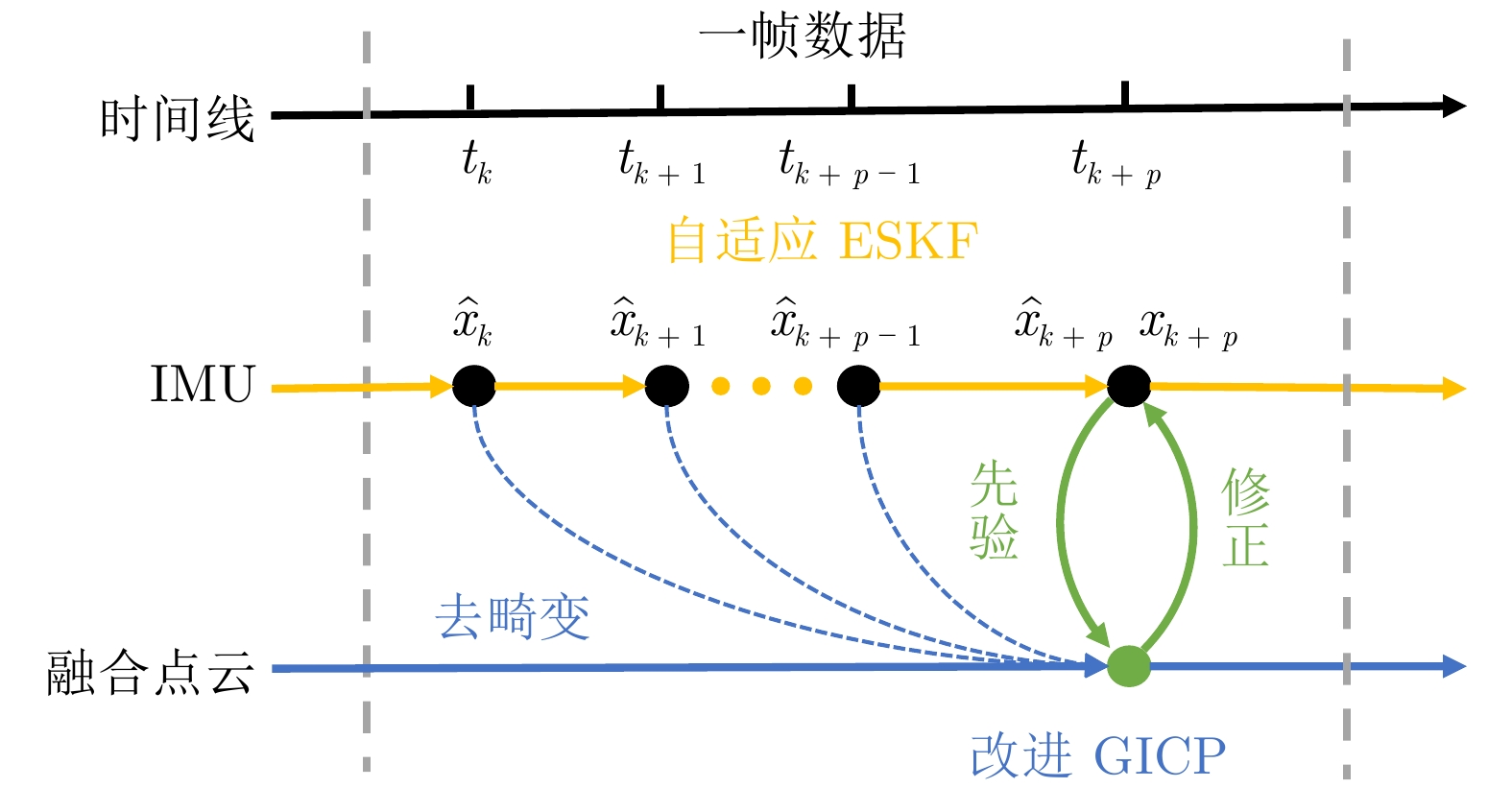

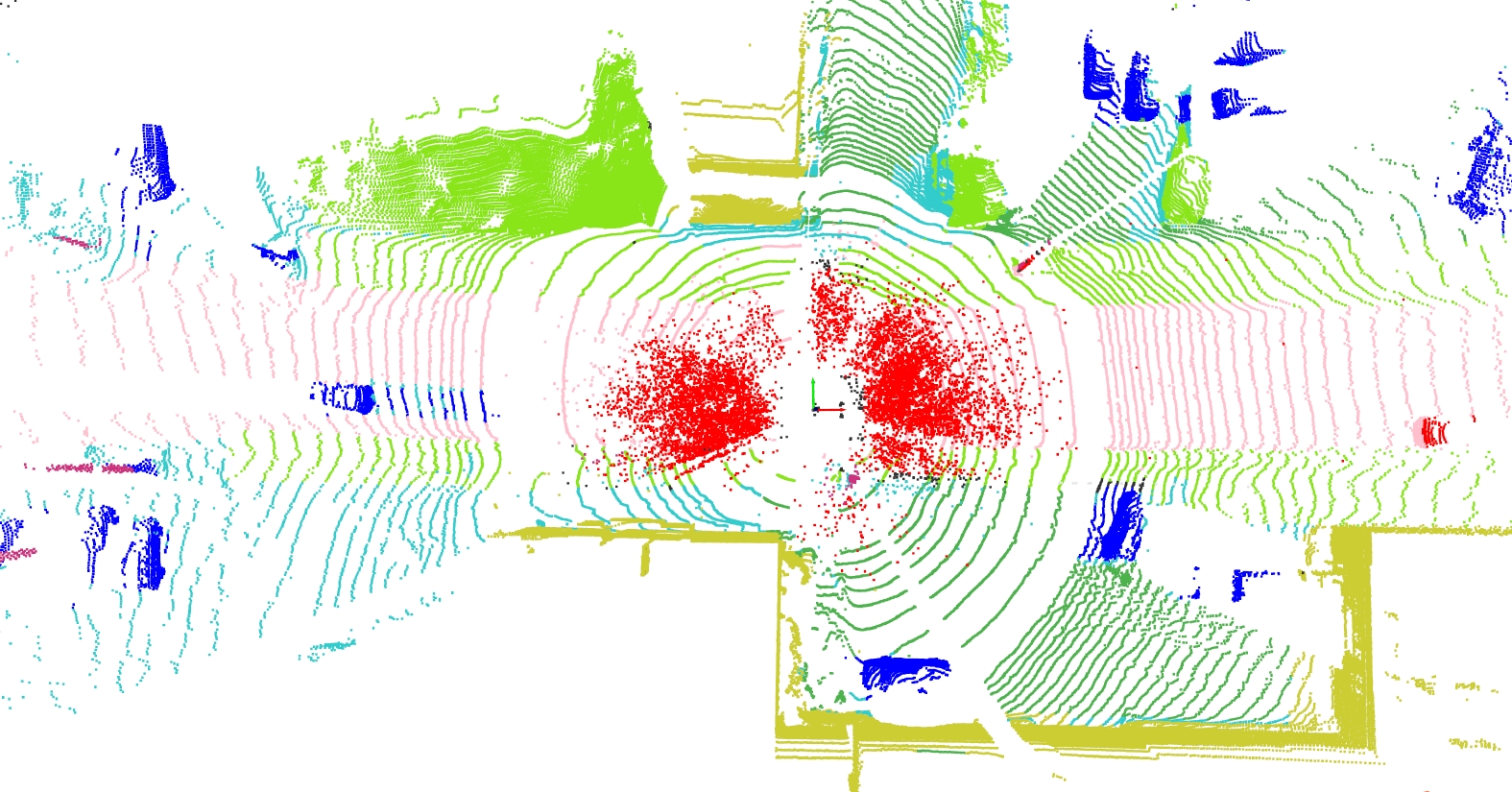

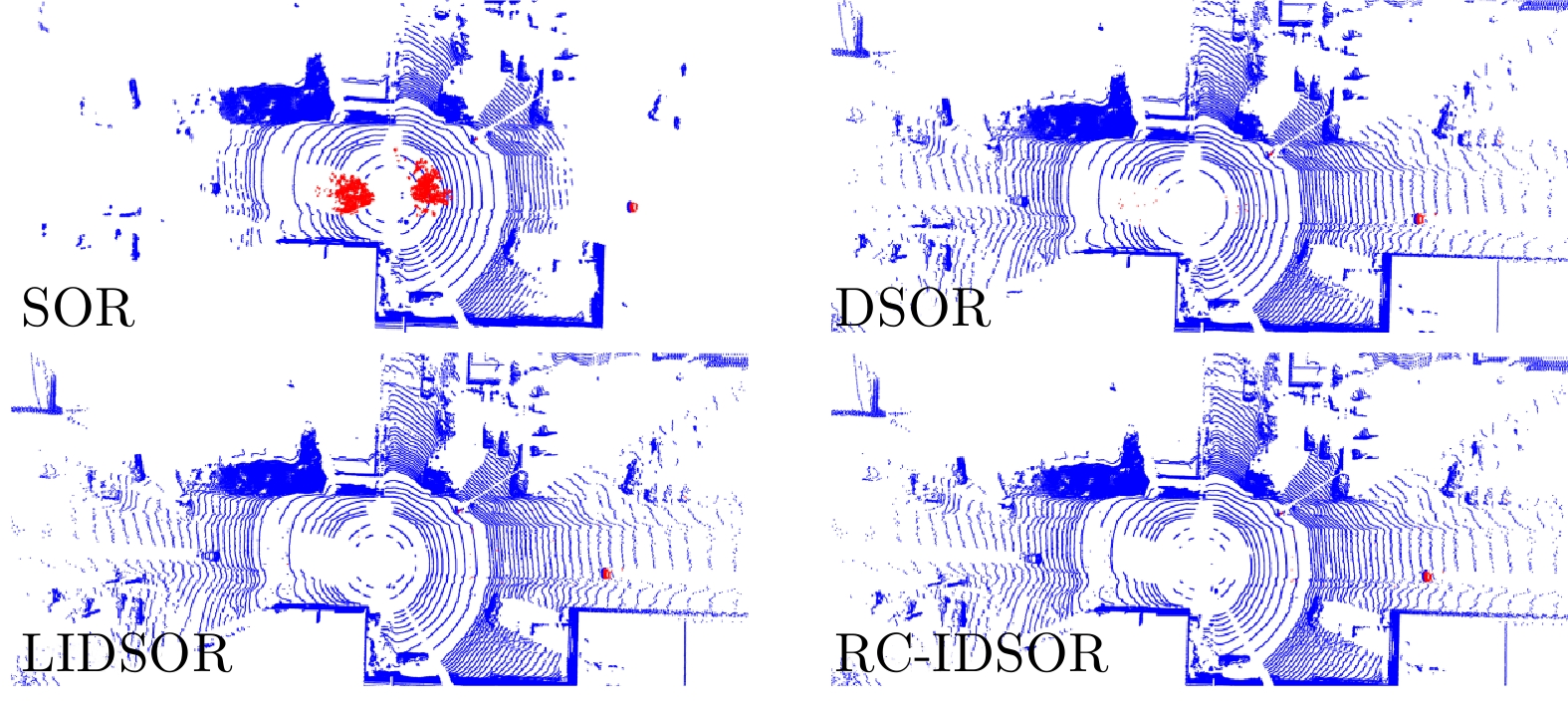

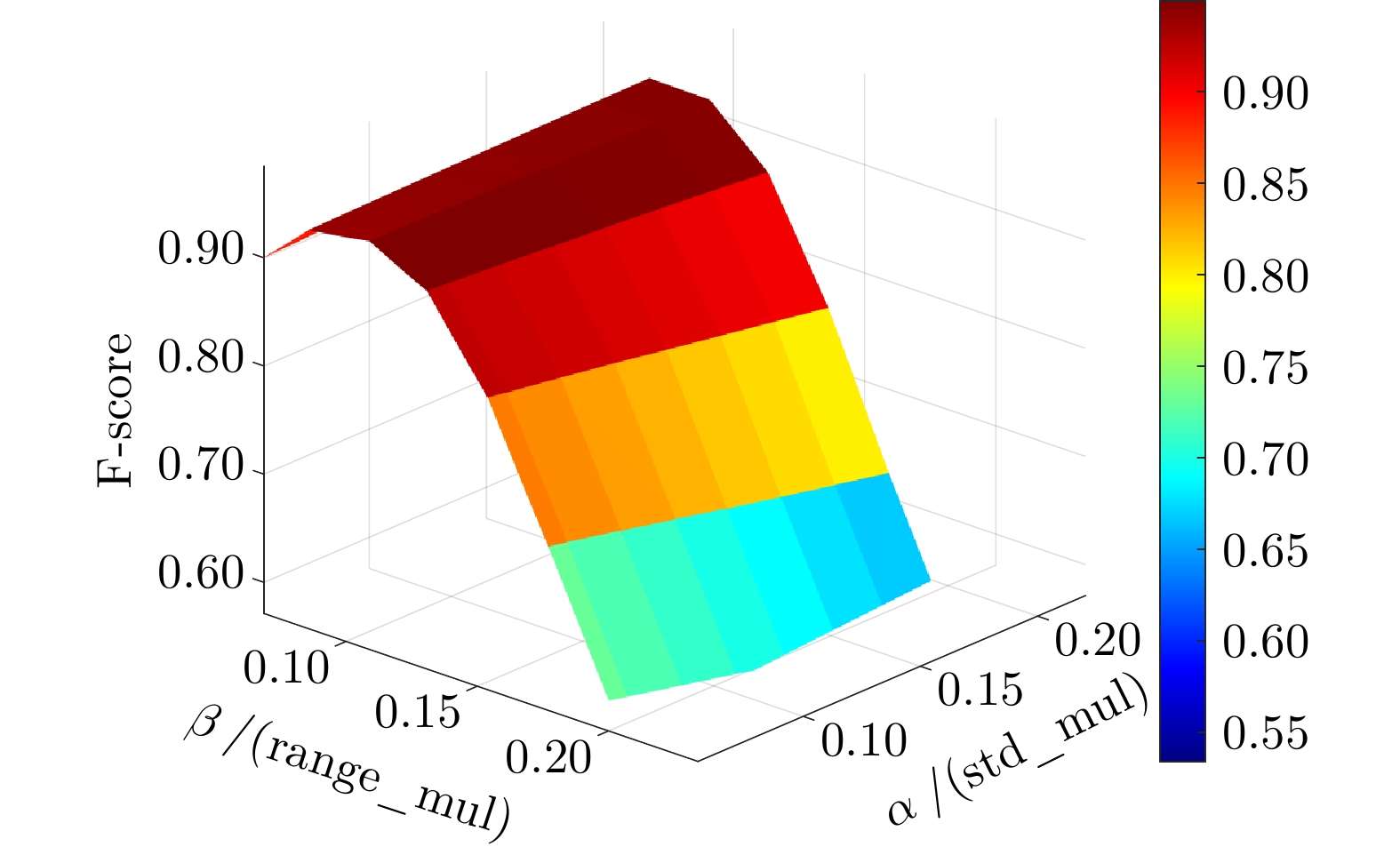

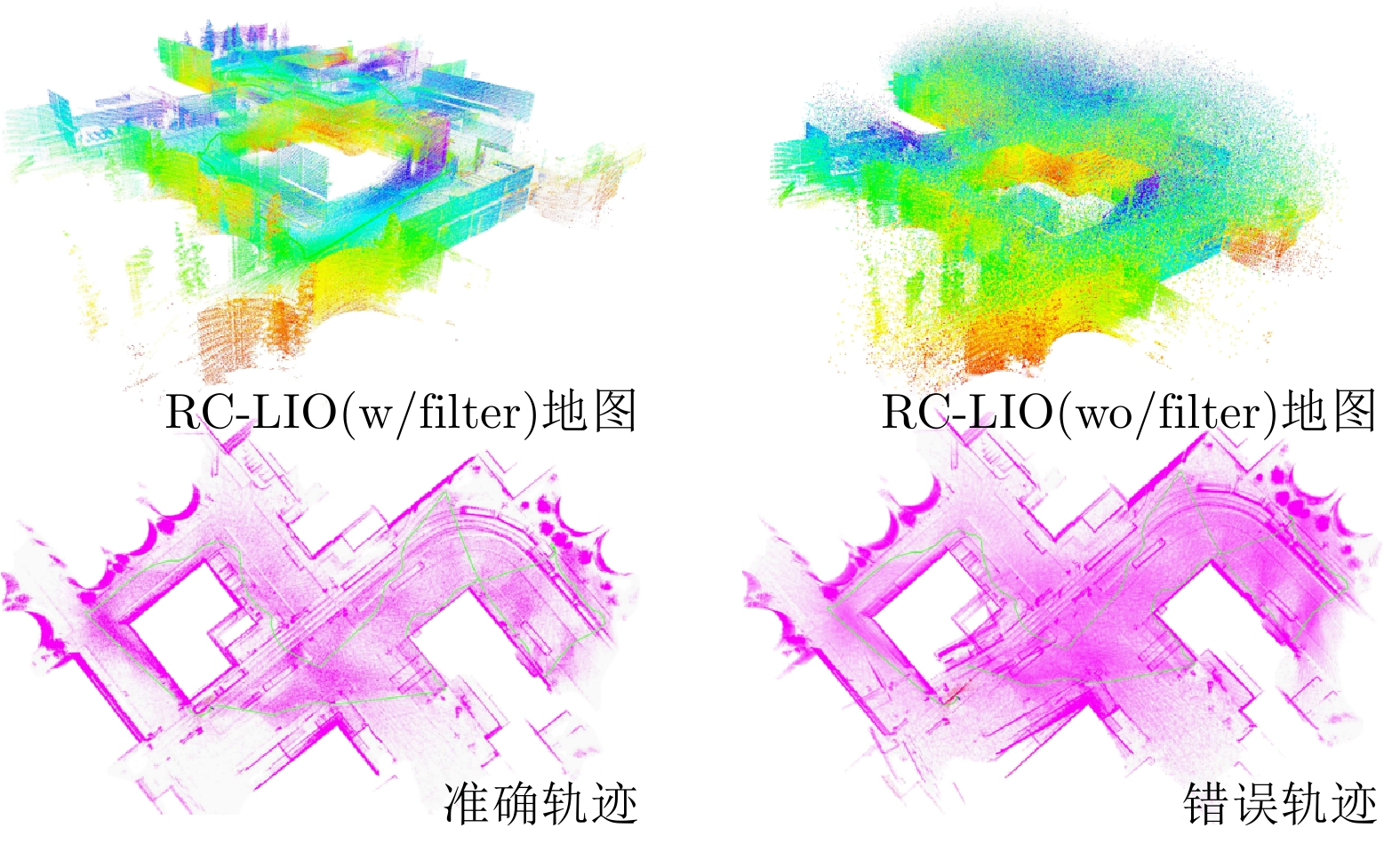

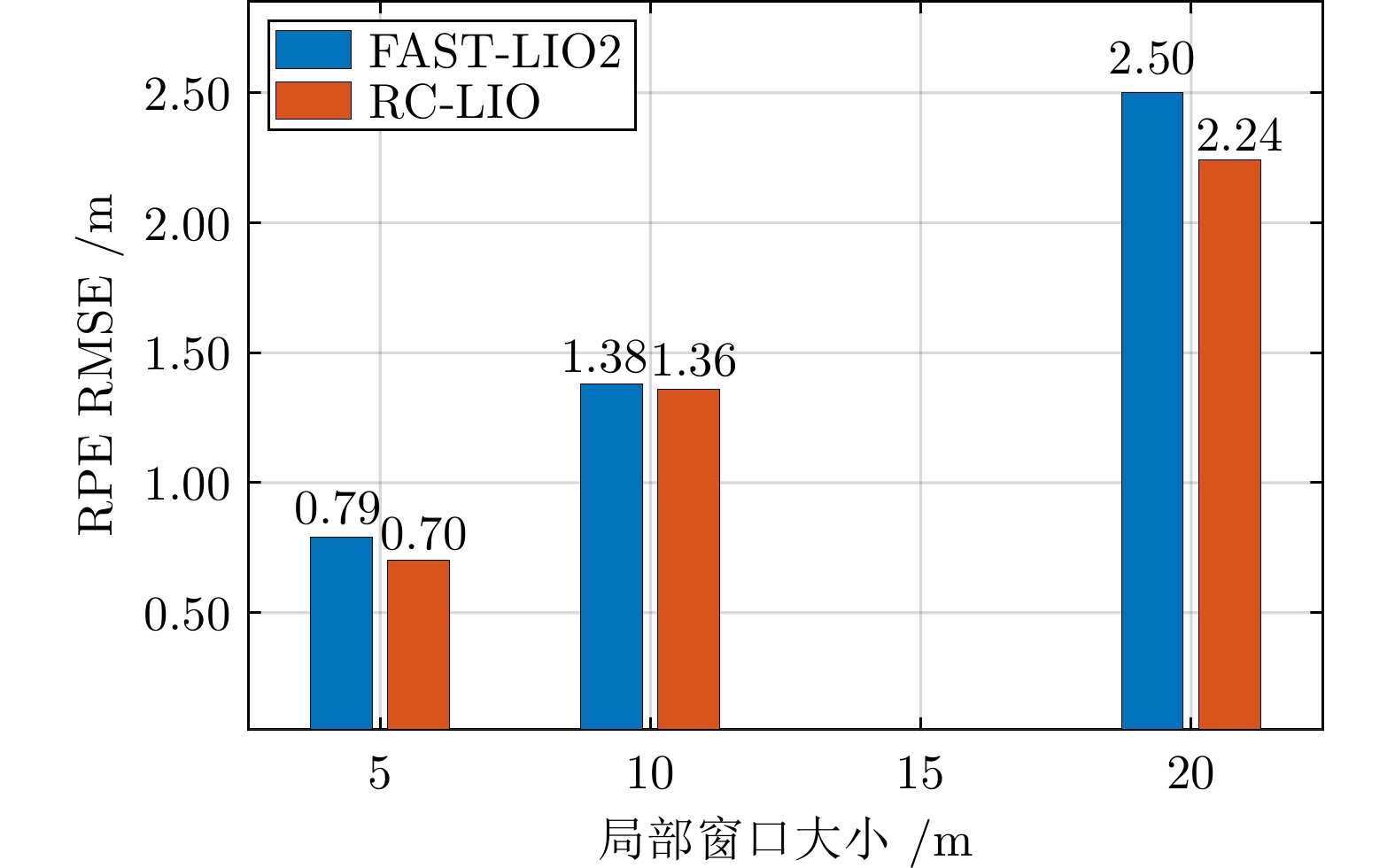

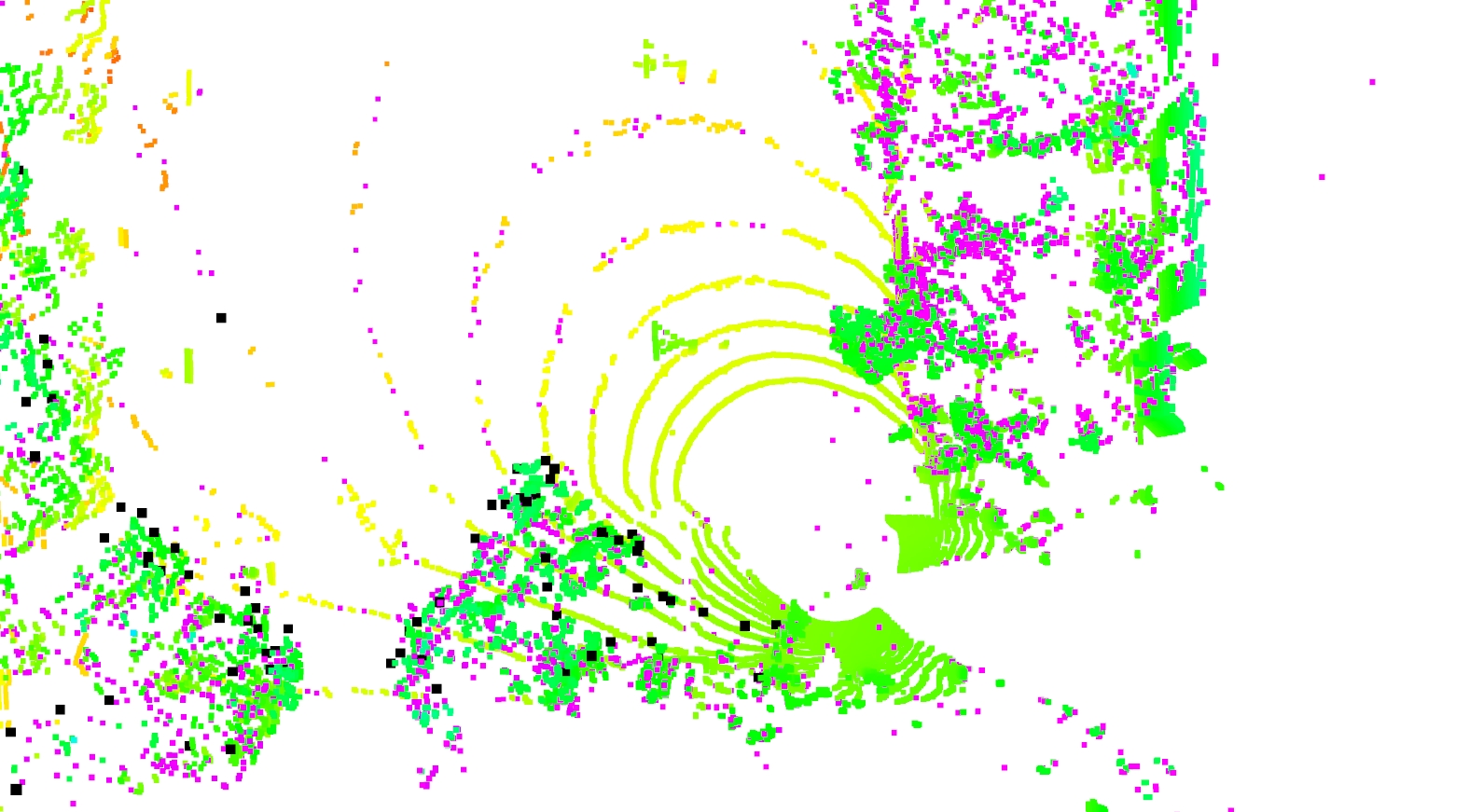

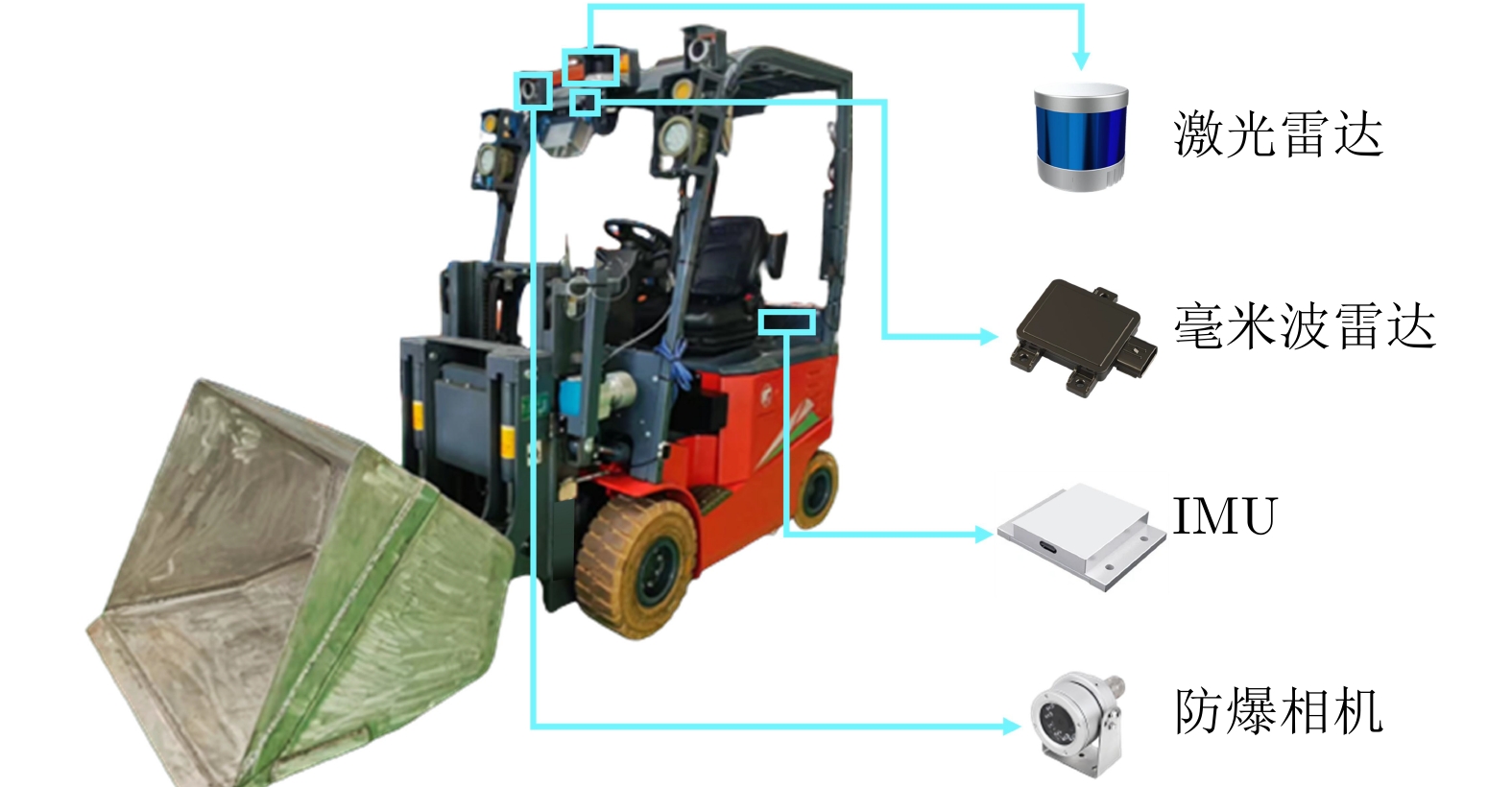

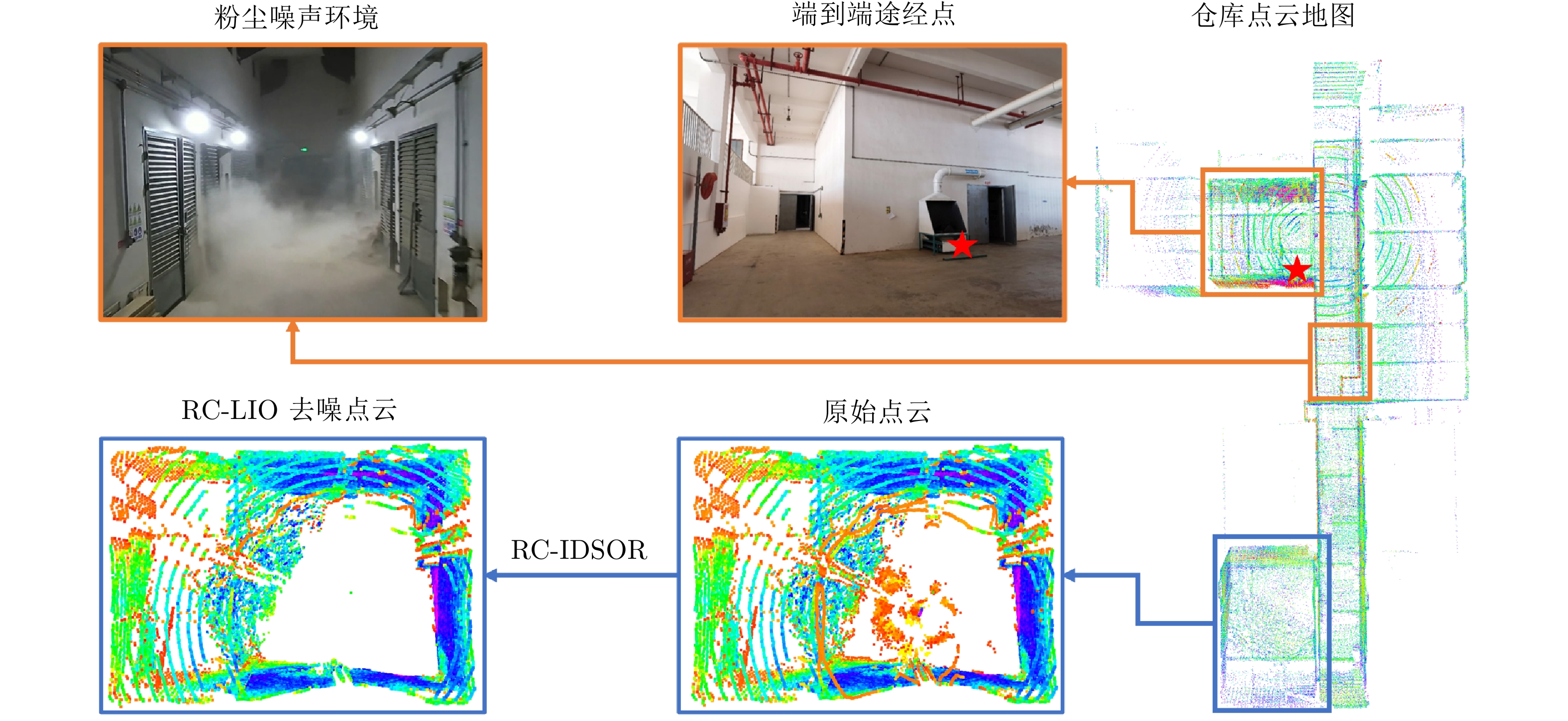

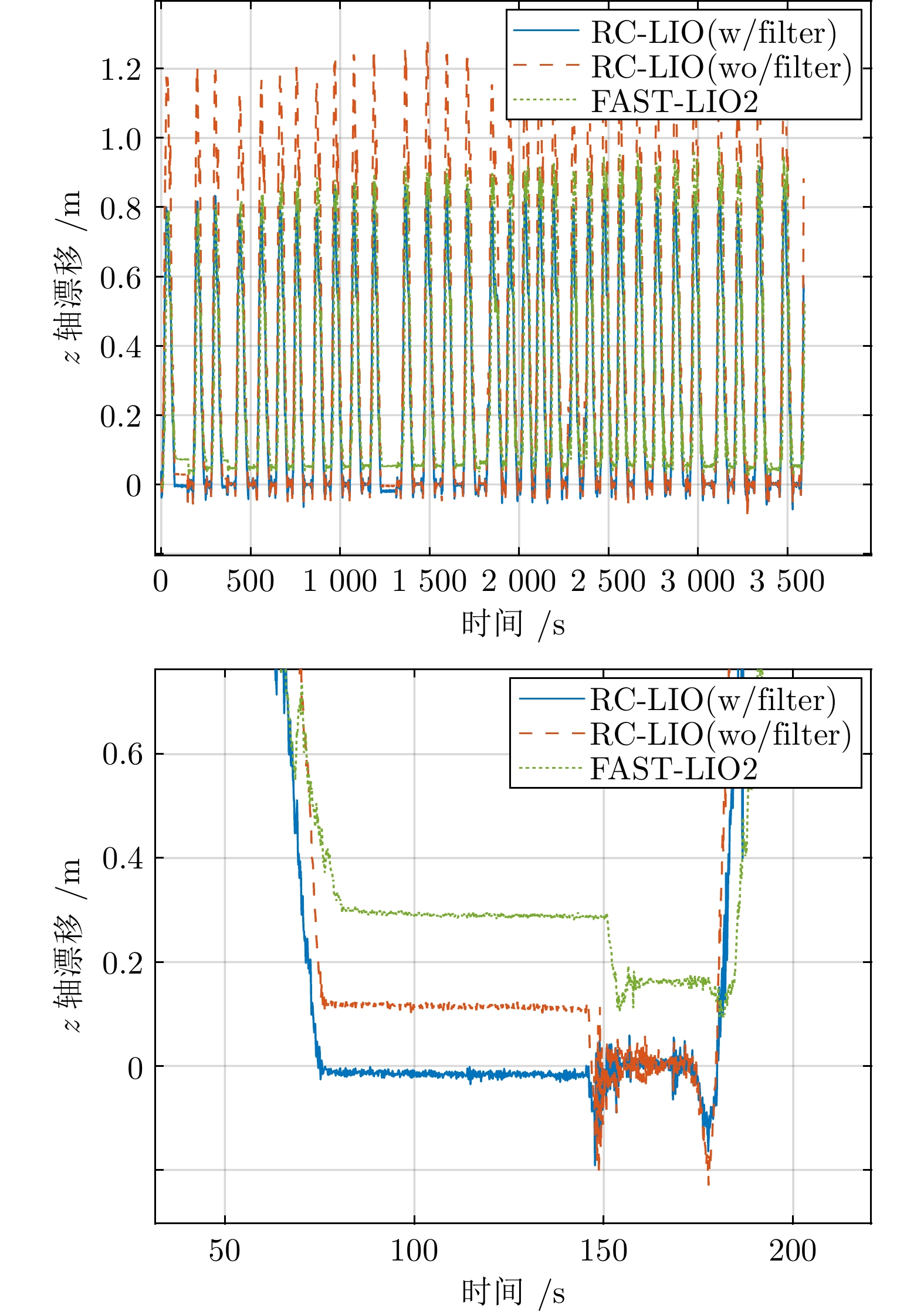

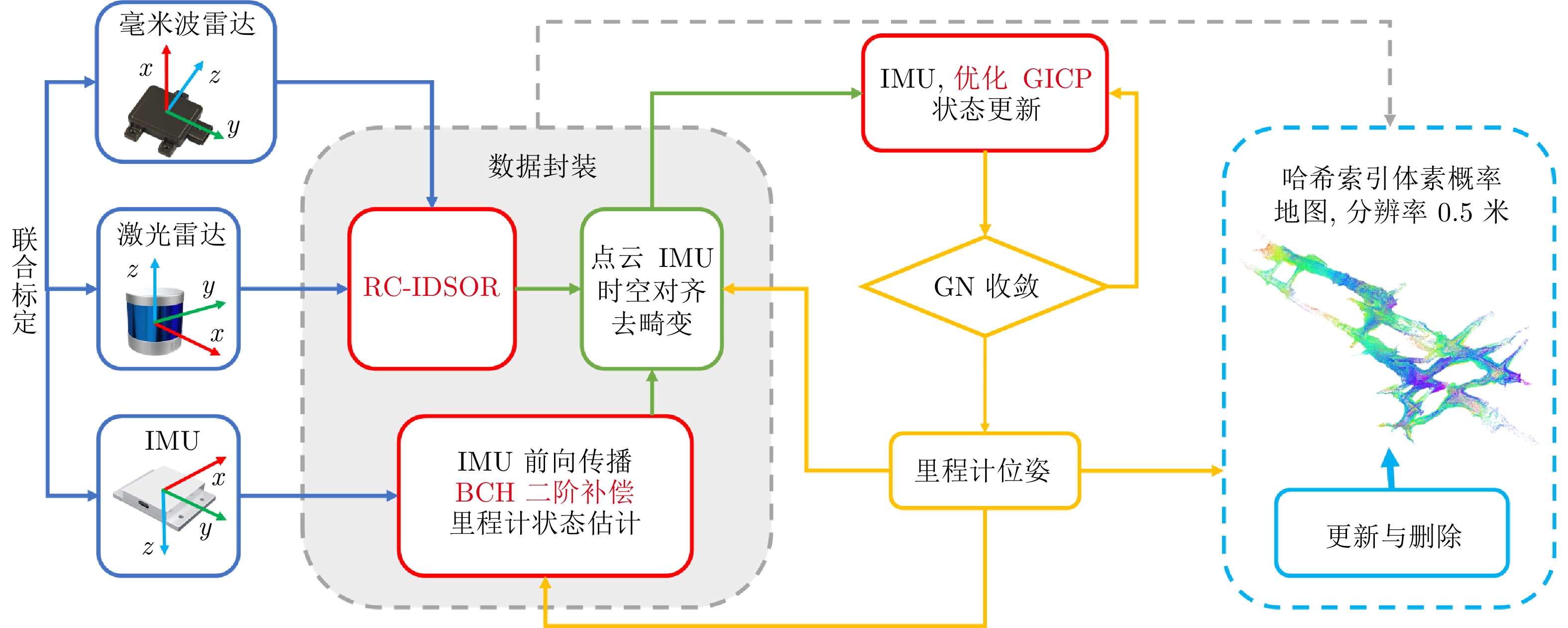

Abstract: LiDAR-based simultaneous localization and mapping (SLAM) is widely used in mobile robotics and autonomous driving. In degraded environments such as rain, snow, and dust, however, laser beams are scattered by airborne particles and produce large numbers of noise points, which distort the map and cause localization drift. This paper proposes a millimeter-wave radar-compensated intensity-based dynamic statistical outlier removal method (RC-IDSOR) that filters LiDAR noise points in real time while preserving the structural features of the environment. Building on this filter, a radar-compensated LiDAR-inertial odometry (RC-LIO) is developed. On one hand, the dynamic local covariance is optimized and an intensity-confidence weighting mechanism is designed to improve the stability of generalized ICP matching. On the other hand, a second-order compensation term is added to the error-state Kalman filter prediction to improve IMU propagation accuracy in highly dynamic scenes. Experimental results show that RC-IDSOR achieves an average F-score above 0.85 on the WADS dataset, with precision improved by about 6.8%. RC-LIO attains an average absolute trajectory error (ATE) of about 0.33 m in the degraded SubT-MRS scenarios and, in the heavy-rain environment of the Snail-Radar dataset, reduces localization error by about 49.6% compared with running without filtering. Finally, RC-LIO is deployed on industrial vehicles operating in heavy-dust environments, where tests show a short-term repeat-positioning error below 5.6 cm and stable long-term operation, demonstrating real-time performance and engineering feasibility.
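The abstract does not spell out how RC-IDSOR works, so the following is only a minimal sketch of the general family it belongs to: a DSOR-style dynamic statistical outlier filter with a simple intensity gate. The function name, every parameter and default value, and the rule "keep strong returns unconditionally" are assumptions made for illustration. The radar compensation step is not shown because this section gives no details about it.

```python
import math
import statistics


def dsor_like_filter(points, intensities, k=3, s=1.0, r=0.05, intensity_keep=0.5):
    """Return indices of points kept by a DSOR-style filter with an intensity gate.

    All names and default values here are hypothetical, not the paper's.
    """
    if len(points) <= k:
        raise ValueError("need more than k points")

    # Mean distance from each point to its k nearest neighbours (brute force).
    mean_knn = []
    for i, p in enumerate(points):
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        mean_knn.append(sum(dists[:k]) / k)

    # Global threshold from the statistics of all mean neighbour distances.
    global_thr = statistics.fmean(mean_knn) + s * statistics.pstdev(mean_knn)

    kept = []
    for i, p in enumerate(points):
        sensor_range = math.dist(p, (0.0, 0.0, 0.0))
        # The threshold grows with range because LiDAR returns get sparser far away.
        dynamic_thr = r * global_thr * sensor_range
        # Assumed gate: strong returns are treated as solid surfaces and kept.
        if intensities[i] >= intensity_keep or mean_knn[i] <= dynamic_thr:
            kept.append(i)
    return kept


# Toy scene: a dense 3x3 wall patch 10 m away plus two isolated, weak returns.
wall = [(10.0, y, z) for y in (-0.1, 0.0, 0.1) for z in (-0.1, 0.0, 0.1)]
noise = [(2.0, 0.0, 0.0), (3.0, 1.0, 0.5)]
points = wall + noise
intensities = [0.2] * len(wall) + [0.05] * len(noise)
kept = dsor_like_filter(points, intensities)
```

In this toy scene the nine wall points survive and the two isolated near-range returns are dropped. A real pipeline would replace the brute-force neighbour search with a k-d tree.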


Table 1 Quantitative evaluation results on WADS sequences

| Sequence | Method | Accuracy | Error | Precision | Recall | F-score |
|---|---|---|---|---|---|---|
| 11 | SOR | 0.7633 | 0.2367 | 0.1302 | 0.2881 | 0.1791 |
| 11 | DSOR | 0.9316 | 0.0684 | 0.6038 | 0.8208 | 0.6902 |
| 11 | LIDSOR | 0.9623 | 0.0377 | 0.7911 | 0.8056 | 0.7936 |
| 11 | RC-IDSOR | 0.9711 | 0.0289 | 0.8767 | 0.8022 | 0.8319 |
| 12 | SOR | 0.7277 | 0.2723 | 0.1312 | 0.2400 | 0.1668 |
| 12 | DSOR | 0.9366 | 0.0634 | 0.7693 | 0.7393 | 0.7395 |
| 12 | LIDSOR | 0.9539 | 0.0461 | 0.9039 | 0.7298 | 0.7952 |
| 12 | RC-IDSOR | 0.9566 | 0.0434 | 0.9346 | 0.7267 | 0.8061 |
| 13 | SOR | 0.7986 | 0.2014 | 0.1257 | 0.4375 | 0.1939 |
| 13 | DSOR | 0.9628 | 0.0372 | 0.6103 | 0.9822 | 0.7488 |
| 13 | LIDSOR | 0.9842 | 0.0158 | 0.7923 | 0.9776 | 0.8742 |
| 13 | RC-IDSOR | 0.9901 | 0.0099 | 0.8646 | 0.9756 | 0.9164 |
| 18 | SOR | 0.7983 | 0.2017 | 0.1571 | 0.5075 | 0.2386 |
| 18 | DSOR | 0.9458 | 0.0542 | 0.5528 | 0.9888 | 0.7041 |
| 18 | LIDSOR | 0.9829 | 0.0171 | 0.7951 | 0.9819 | 0.8777 |
| 18 | RC-IDSOR | 0.9903 | 0.0097 | 0.8794 | 0.9787 | 0.9261 |
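The columns of Table 1 are standard binary-classification metrics. As a minimal sketch, assuming that noise points are the positive class and that the metrics are computed from per-point confusion counts, they can be written as below. The function name and the example counts are illustrative and are not taken from the paper.

```python
def filter_metrics(tp, fp, fn, tn):
    """Compute the Table 1 style metrics from confusion counts.

    Assumption: a "positive" is a point labelled as noise (rain, snow, dust).
    """
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "accuracy": accuracy,
        "error": 1.0 - accuracy,  # error is the complement of accuracy
        "precision": precision,
        "recall": recall,
        "f_score": 2.0 * precision * recall / (precision + recall),
    }


# Hypothetical counts for one frame: 100 true noise points among 1000 points.
metrics = filter_metrics(tp=80, fp=20, fn=20, tn=880)
```

In every row of Table 1 the accuracy and error columns sum to 1, which matches this definition. The tabulated F-scores do not follow exactly from the tabulated precision and recall, presumably because the values are averaged over frames.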

Table 2 ATE of different odometry methods on the SubT-MRS dataset (m)

| Scene | FAST-LIO2, HBA | FAST-LIO, Pose Graph | Point-LIO, Quatro | LIO-EKF | DLO, Scan-Context++ | RC-LIO |
|---|---|---|---|---|---|---|
| Urban | 0.307 | 0.260 | 0.331 | 1.060 | 1.205 | 0.409 |
| Tunnel | 0.095 | 0.096 | 0.092 | 0.220 | 0.695 | 0.067 |
| Cave | 0.629 | 0.617 | 0.787 | 0.750 | - | 0.491 |
| Nuclear-1 | 0.122 | 0.120 | 0.123 | 0.470 | 1.175 | 0.040 |
| Nuclear-2 | 0.235 | 0.222 | 0.270 | 0.620 | 1.720 | 0.183 |
| LaurelCaverns | 0.260 | 0.402 | 0.279 | 9.140 | 2.080 | 0.269 |
| Factory | 0.889 | 0.998 | 10.628 | 4.920 | 0.889 | 0.540 |
| Ocean | 0.757 | 0.770 | 22.425 | 0.280 | 0.778 | 0.387 |
| Sewerage | 0.978 | 1.586 | 7.147 | 24.460 | 1.130 | 0.588 |
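For readers unfamiliar with the metric, ATE is commonly reported as the root-mean-square position error between an estimated trajectory and ground truth after the two have been time-associated and aligned. This section does not state which ATE variant or alignment the authors use, so the sketch below is an assumption: it takes already aligned, equally sampled 3D positions and uses a hypothetical function name.

```python
import math


def ate_rmse(estimated, ground_truth):
    """RMSE of position errors between two aligned trajectories, in metres.

    Assumes both inputs are equal-length sequences of (x, y, z) positions
    that are already time-associated and expressed in the same frame.
    """
    if not estimated or len(estimated) != len(ground_truth):
        raise ValueError("trajectories must be non-empty and of equal length")
    squared = [math.dist(e, g) ** 2 for e, g in zip(estimated, ground_truth)]
    return math.sqrt(sum(squared) / len(squared))


# Toy example: lateral errors of 0 m, 0.1 m and 0.1 m along a straight path.
gt = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
est = [(0.0, 0.0, 0.0), (1.0, 0.1, 0.0), (2.0, -0.1, 0.0)]
ate = ate_rmse(est, gt)
```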

Table 3 ATE of different RC-LIO modes on the Snail-Radar dataset (m)

| LiDAR / mmWave radar odometry | Light rain | Rain | Heavy rain |
|---|---|---|---|
| RC-LIO (w/ RC-IDSOR) | 0.1756 | 0.3726 | 1.2441 |
| RC-LIO (w/ LIDSOR) | 0.1778 | 0.5648 | 1.8550 |
| RC-LIO (w/o filter) | 0.2064 | 0.4435 | 2.4664 |
| 4DRadarSLAM | 1.6000 | 27.5000 | 37.8000 |
| EKF-RIO | 6.0000 | 58.3000 | 143.9000 |
| 4D-iRIOM | 0.7000 | 12.6000 | 31.7000 |

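Table 4 Sensor configuration parameters of the work vehicle

| Sensor | Model | Parameters |
|---|---|---|
| LiDAR | Hesai PandarQT | 768,000 points/s, 104.2° vertical field of view |
| mmWave radar | Nanoradar SR75 | 4D imaging, 120° horizontal field of view |
| IMU | WHEELTEC N100 | 200 Hz, 0.003 °/s gyroscope noise density |
| Industrial PC | MiiVii AD10 | NVIDIA Jetson AGX Orin (64 GB) |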

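Table 5 Real-time performance test results of RC-LIO on different platforms

| Platform | Algorithm | CPU usage (%) | Memory usage (MB) | IMU + undistortion time (ms) | Point cloud matching time (ms) | Single-thread total time (ms) |
|---|---|---|---|---|---|---|
| x86 | RC-LIO (w/ filter) | 2.1 | 36.4 | 3.7 | 0.9 | 4.7 |
| x86 | FAST-LIO2 | 3.7 | 130.7 | 1.3 | 3.0 | 4.4 |
| x86 | LIO-SAM | 12.0 | 87.2 | 6.5 | 11.7 | 16.4 |
| ARM | RC-LIO (w/ filter) | 6.3 | 78.4 | 21.6 | 4.1 | 26.4 |
| ARM | FAST-LIO2 | 3.7 | 167.5 | 3.5 | 19.9 | 24.6 |
| ARM | LIO-SAM | 10.9 | 224.6 | 9.9 | 24.5 | 34.4 |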

Table 6 Comparison of positioning deviations in different RC-LIO modes (m)

| Trial | RC-LIO (w/ filter) | RC-LIO (w/o filter) | FAST-LIO2 |
|---|---|---|---|
| 1 | 0 | 0 | 0 |
| 2 | 0.024 | 0.026 | 0.051 |
| 3 | 0.022 | 0.030 | 0.076 |
| 4 | 0.052 | 0.055 | 0.088 |
| 5 | 0.056 | 0.066 | 0.072 |
| 6 | 0.045 | 0.053 | 0.117 |
| 7 | 0.056 | 0.056 | 0.138 |
| 8 | 0.037 | 0.038 | 0.127 |
| 9 | 0.044 | 0.053 | 0.102 |
| 10 | 0.025 | 0.024 | 0.136 |
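The headline figures quoted in the abstract can be cross-checked against the tabulated values with a few lines of arithmetic. The numbers below are copied from Tables 1, 2, 3 and 6. The variable names are illustrative, and the choice of LIDSOR as the baseline for the precision gain is an assumption, since this section does not name the baseline. Under that assumption the gain of about 6.8% is an absolute difference in mean precision.

```python
def mean(values):
    return sum(values) / len(values)


# Table 1: per-sequence results on WADS sequences 11, 12, 13, 18.
rc_idsor_f = [0.8319, 0.8061, 0.9164, 0.9261]
rc_idsor_precision = [0.8767, 0.9346, 0.8646, 0.8794]
lidsor_precision = [0.7911, 0.9039, 0.7923, 0.7951]  # assumed baseline

# Table 2: RC-LIO column, nine SubT-MRS scenes (m).
rc_lio_subt_ate = [0.409, 0.067, 0.491, 0.040, 0.183, 0.269, 0.540, 0.387, 0.588]

# Table 3: heavy-rain ATE without filtering and with RC-IDSOR (m).
heavy_rain_no_filter, heavy_rain_rc_idsor = 2.4664, 1.2441

# Table 6: RC-LIO (w/ filter) deviations over ten trials (m).
rc_lio_repeat = [0, 0.024, 0.022, 0.052, 0.056, 0.045, 0.056, 0.037, 0.044, 0.025]

mean_f = mean(rc_idsor_f)                                            # about 0.870
precision_gain = mean(rc_idsor_precision) - mean(lidsor_precision)   # about 0.068
mean_ate = mean(rc_lio_subt_ate)                                     # about 0.330 m
rain_reduction = (heavy_rain_no_filter - heavy_rain_rc_idsor) / heavy_rain_no_filter  # about 0.496
worst_repeat_cm = max(rc_lio_repeat) * 100                           # about 5.6 cm
```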

