Semantic Segmentation of Distribution Network Point Clouds Based on a Structure Spectrum-Aware Framework


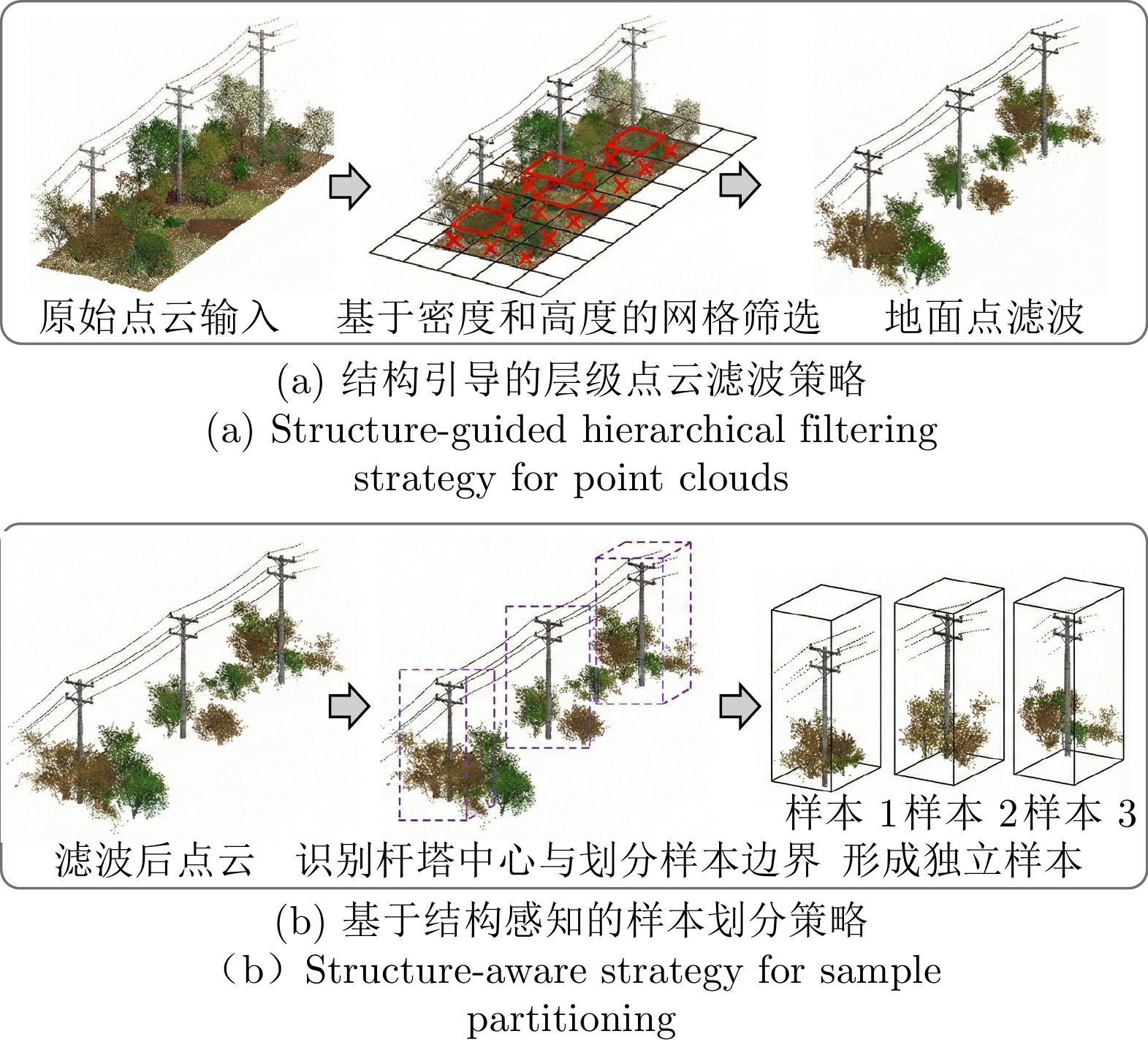

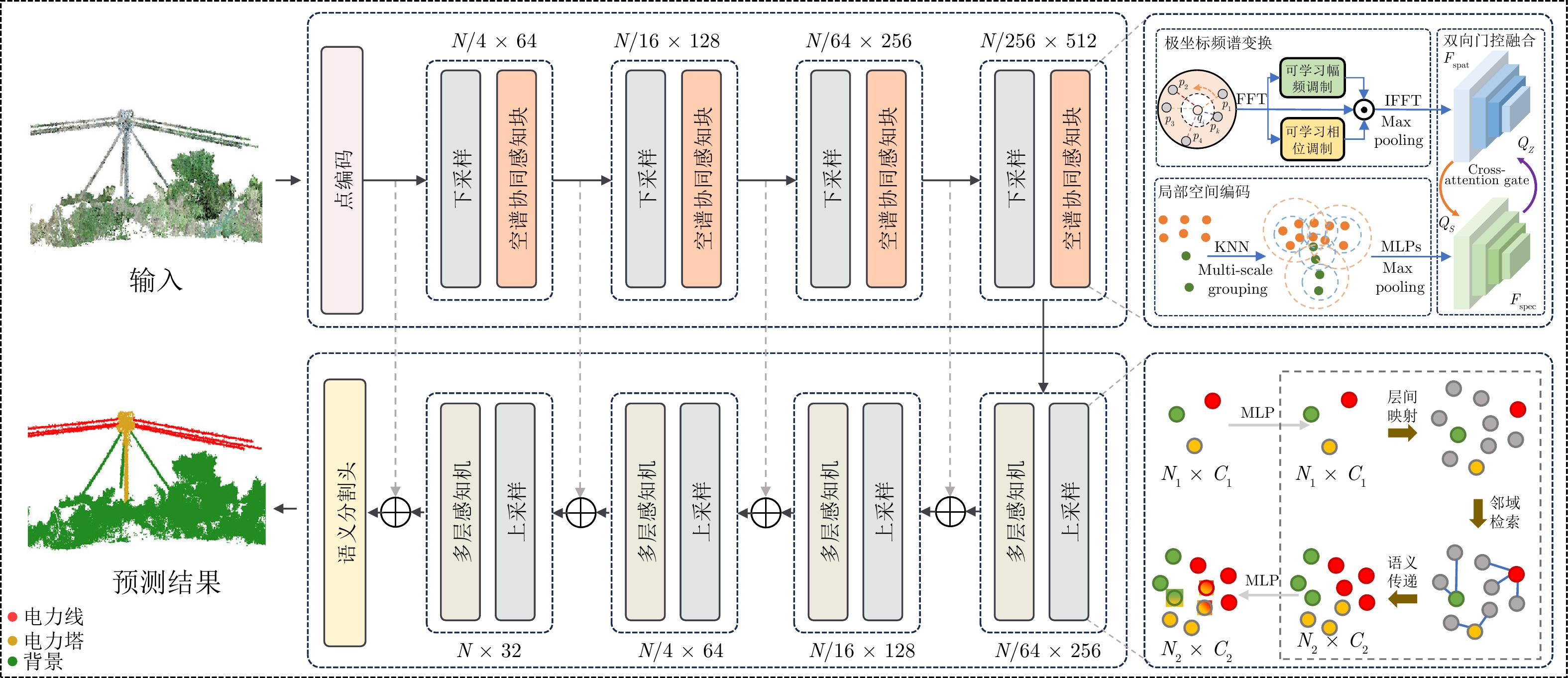

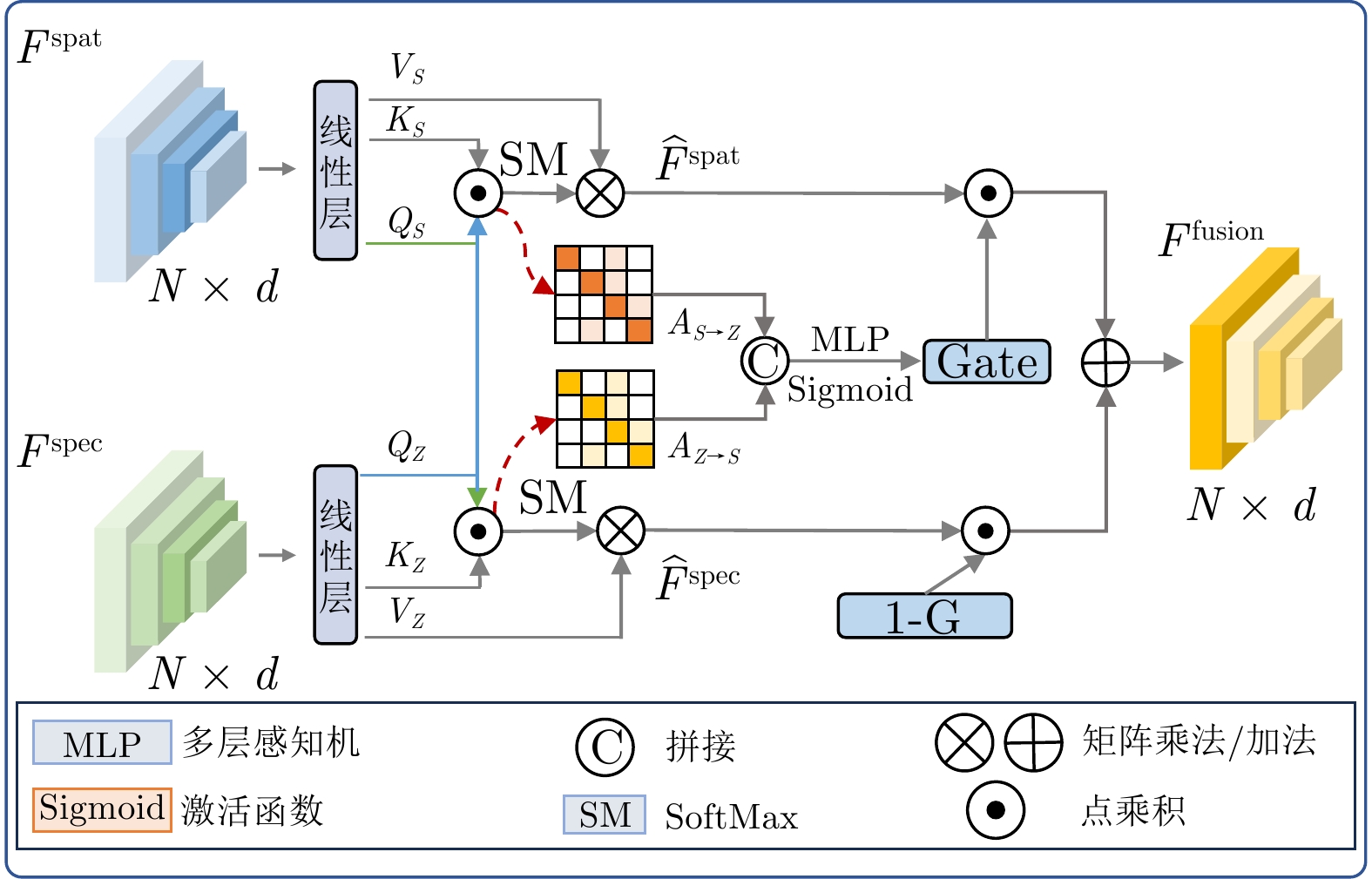

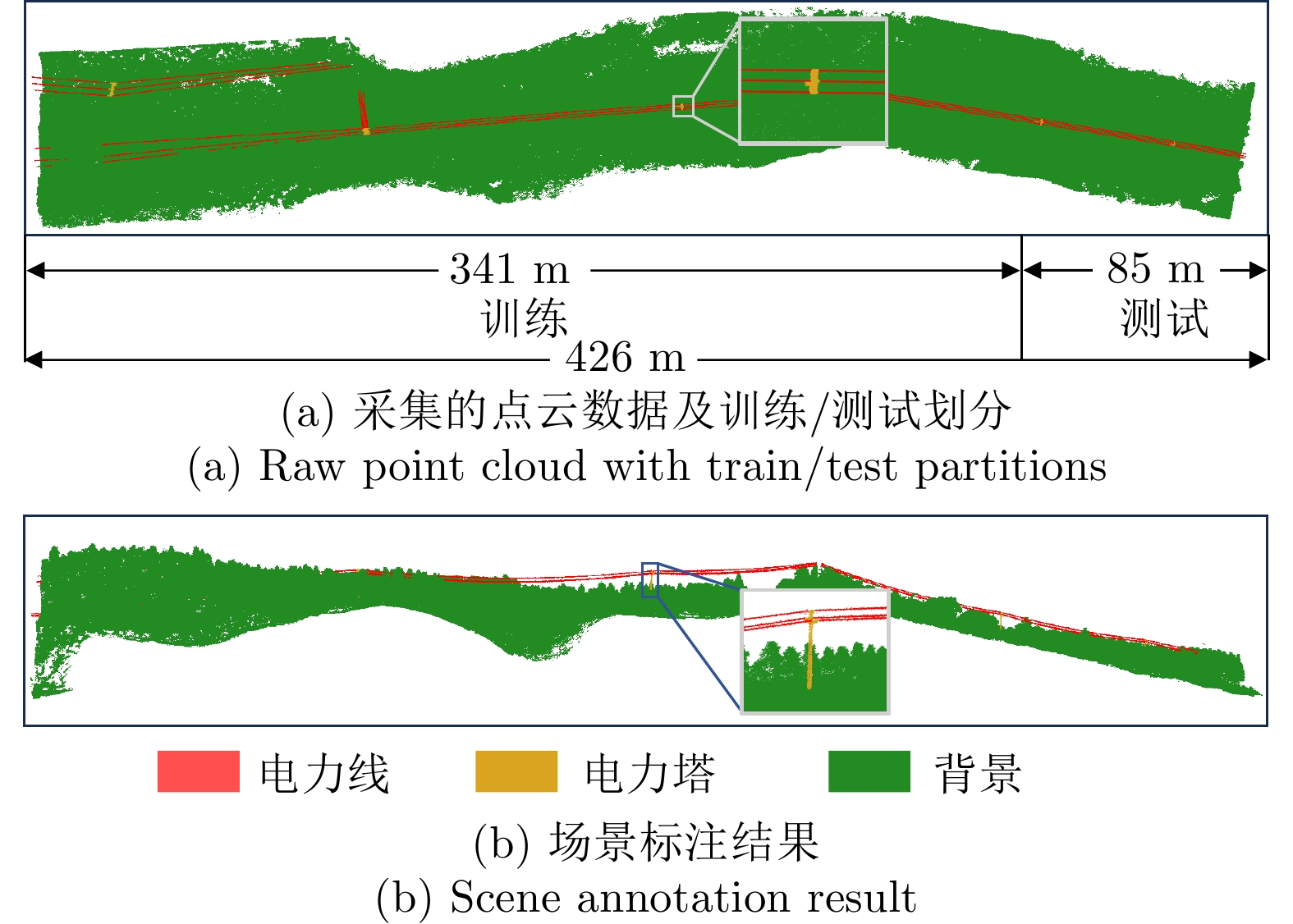

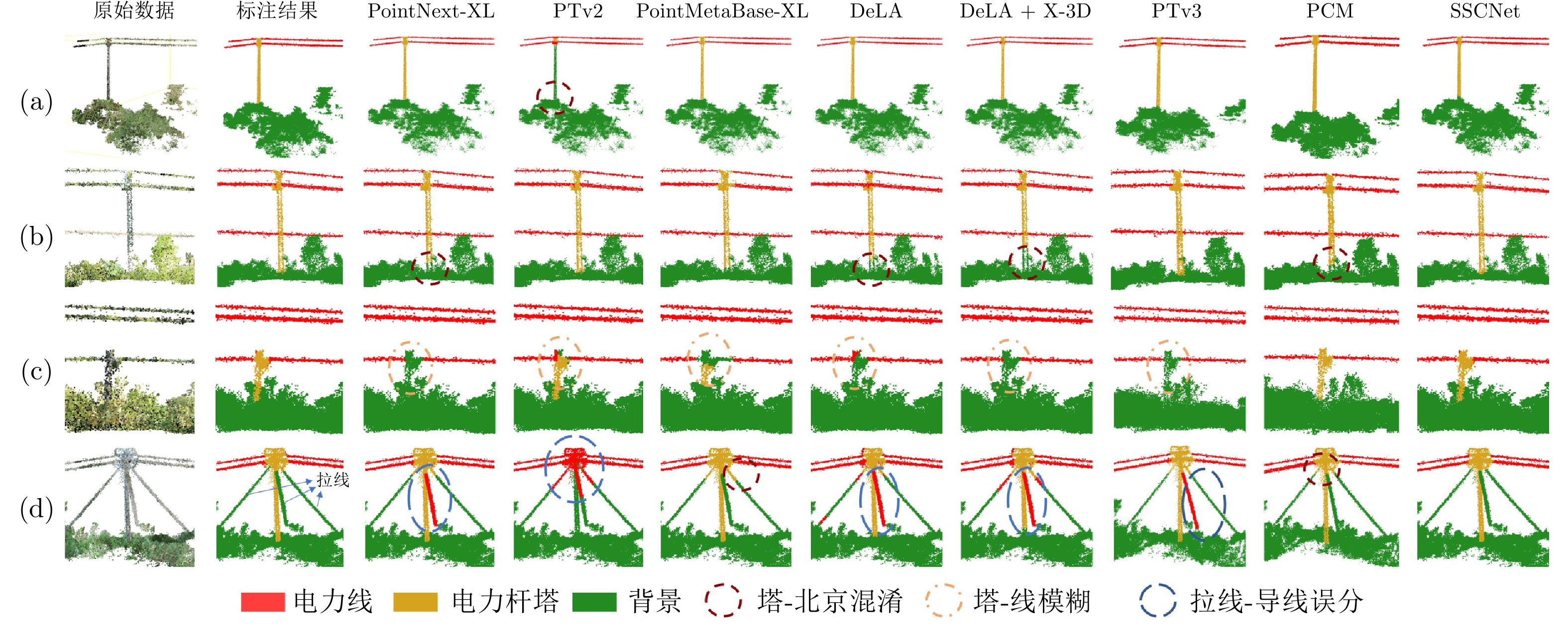

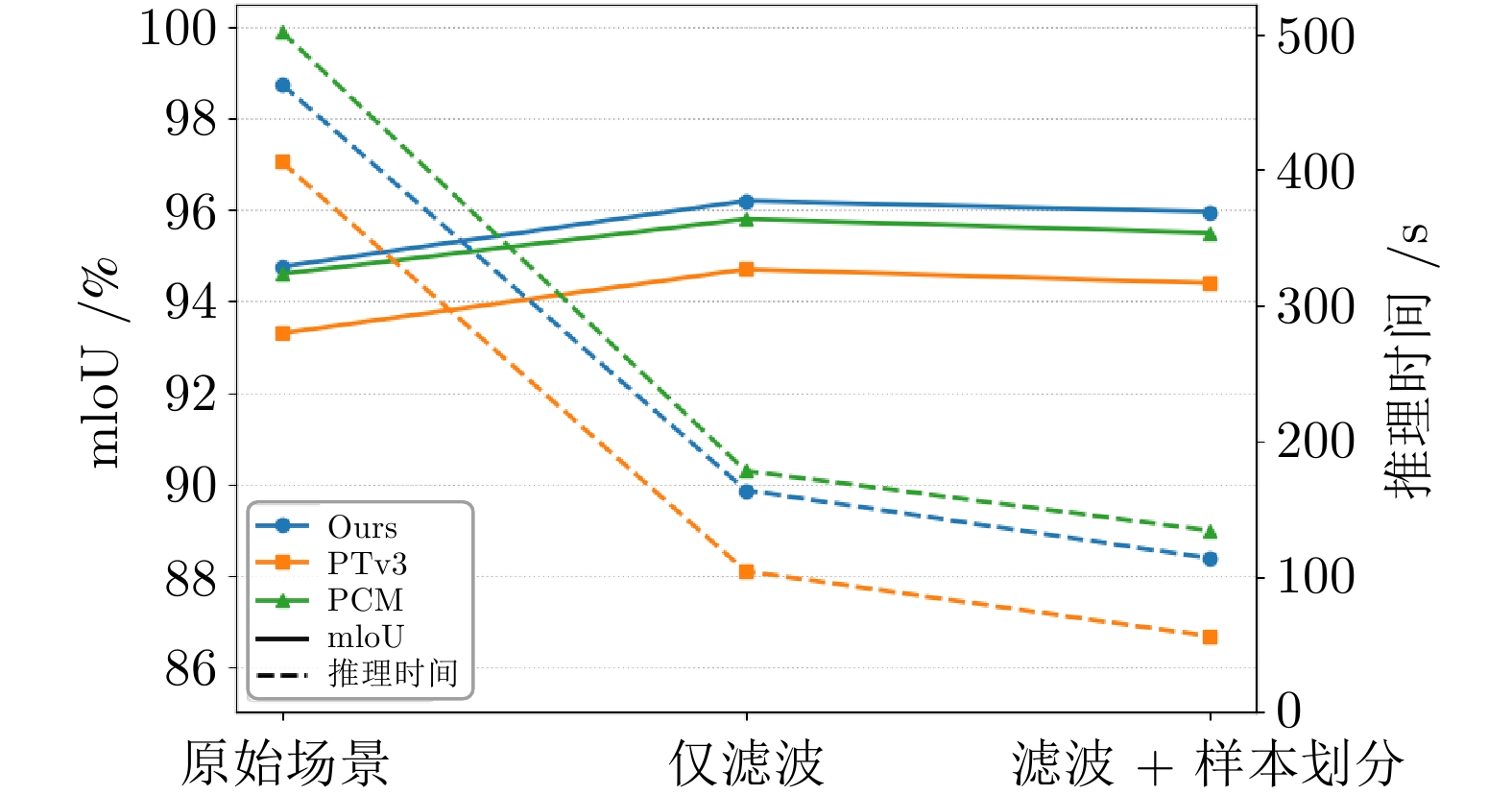

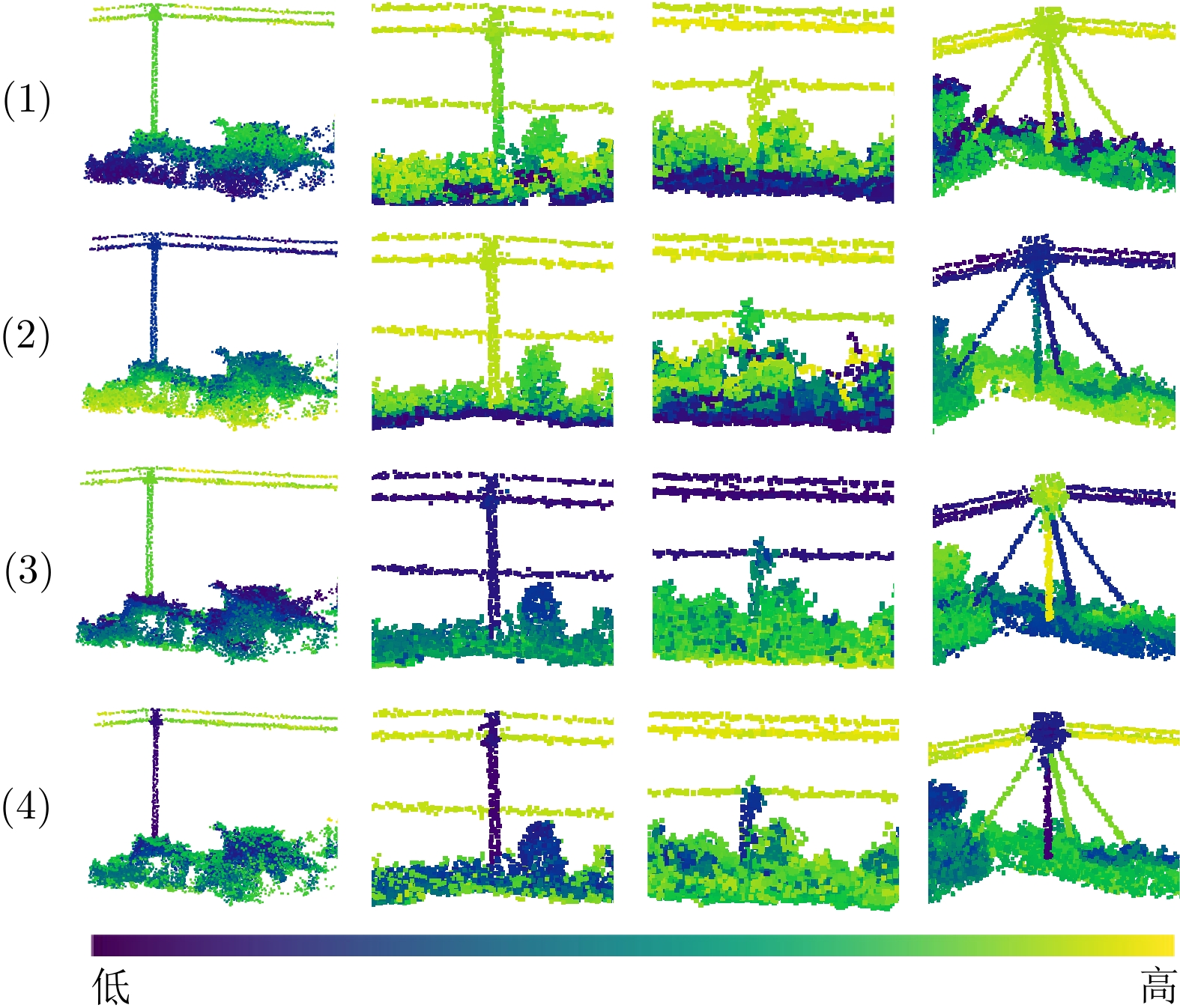

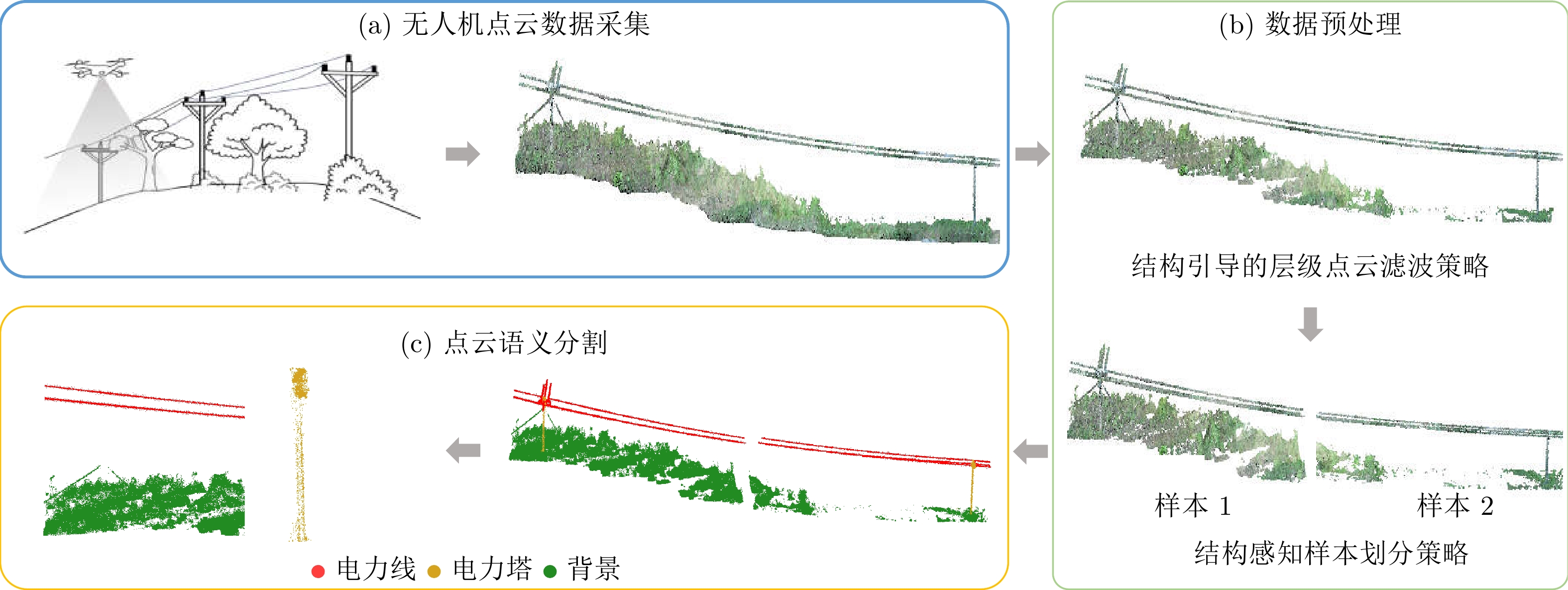

Abstract: Semantic segmentation of point clouds of power distribution networks is essential for unmanned inspection and for intelligent grid operation and maintenance. Existing methods have made progress in spatial modeling and structural enhancement. However, spectral feature mining and the efficiency of large-scale point cloud processing remain major challenges. To address these issues, this paper proposes a Structure Spectrum-Aware Framework (SSAF) that strengthens point cloud representation in long-distance distribution network scenes. In the data preprocessing stage, the framework combines a geometry-based, structure-guided hierarchical point cloud denoising strategy with a structure-aware sample partitioning method. Together they compress redundant background points while preserving the structural integrity and continuity of key objects such as poles and wires. In the semantic segmentation stage, a Spatial-Spectral Collaborative Semantic Segmentation Network (SSCNet) is constructed. SSCNet introduces local polar coordinate systems to strengthen the modeling of direction-sensitive features. An attention-map-based dynamic fusion mechanism then enables adaptive interaction and mutual enhancement between spatial and spectral features. Experiments on a real-world distribution network point cloud dataset show that SSAF achieves higher segmentation accuracy and inference efficiency. It significantly outperforms existing mainstream methods on multiple key metrics, which demonstrates its practicality and engineering potential in complex scenes.
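The abstract says SSCNet builds local polar coordinate systems to capture direction-sensitive features, but it does not give the construction. As an illustration only, the sketch below encodes each point's k nearest neighbours as (r, θ, φ): radial distance, azimuth and elevation. Several details are assumptions made for this example rather than details taken from the paper: the reference axis (the horizontal direction toward the neighbourhood centroid), the brute-force neighbour search, the default `k = 8`, and the name `local_polar_features`.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float, float]
Polar = Tuple[float, float, float]  # (r, theta, phi)


def local_polar_features(points: List[Point], k: int = 8) -> List[List[Polar]]:
    """Hypothetical sketch: encode each point's k nearest neighbours in a local polar frame.

    theta is measured from the horizontal direction pointing toward the
    neighbourhood centroid (an assumed reference axis); phi is the elevation angle.
    """
    feats: List[List[Polar]] = []
    for i, p in enumerate(points):
        # Brute-force kNN (quadratic cost); a KD-tree would be used on real scans.
        nbrs = sorted((j for j in range(len(points)) if j != i),
                      key=lambda j: math.dist(p, points[j]))[:k]
        offsets = [tuple(points[j][a] - p[a] for a in range(3)) for j in nbrs]

        # Local reference axis: XY component of the mean neighbour offset.
        # atan2(0, 0) == 0, so a purely vertical neighbourhood falls back to the x axis.
        mx = sum(o[0] for o in offsets) / len(offsets)
        my = sum(o[1] for o in offsets) / len(offsets)
        ref = math.atan2(my, mx)

        row: List[Polar] = []
        for dx, dy, dz in offsets:
            r = math.sqrt(dx * dx + dy * dy + dz * dz)            # radial distance
            phi = math.atan2(dz, math.hypot(dx, dy))              # elevation in [-pi/2, pi/2]
            theta = math.atan2(dy, dx) - ref                      # azimuth w.r.t. local axis
            theta = (theta + math.pi) % (2 * math.pi) - math.pi   # wrap to [-pi, pi)
            row.append((r, theta, phi))
        feats.append(row)
    return feats


if __name__ == "__main__":
    # A vertical pole sampled every 0.5 m: neighbours lie straight above or below.
    pole = [(0.0, 0.0, 0.5 * z) for z in range(6)]
    print(local_polar_features(pole, k=2)[2])
```

The azimuth is measured against a local reference direction rather than the global x axis. This keeps the encoding unchanged when the whole scene is rotated about the vertical axis. The elevation angle still distinguishes neighbours along near-horizontal conductors (φ close to 0) from neighbours along vertical poles (φ close to ±π/2).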


Table 1 Semantic segmentation experiments on distribution network scene point clouds (%)

| Method | OA | mAcc | mIoU | Background IoU | Power line IoU | Power tower IoU | Params (M) | FLOPs (G) |
|---|---|---|---|---|---|---|---|---|
| DPGCN[19] | 98.26 | 71.29 | 78.92 | 98.20 | 63.10 | 75.46 | 3.60 | — |
| DGCNN[20] | 98.89 | 78.88 | 83.36 | 99.03 | 62.13 | 88.92 | 1.30 | — |
| PointNet++[14] | 98.40 | 86.04 | 84.75 | 99.62 | 67.48 | 87.14 | 1.00 | 7.20 |
| KPConv[31] | 98.90 | 87.91 | 85.51 | 99.90 | 65.71 | 90.91 | 15.00 | — |
| PointNext-XL[32] | 98.27 | 91.40 | 88.54 | 98.65 | 74.72 | 92.25 | 41.60 | 84.80 |
| PTv2[22] | 98.36 | 92.63 | 88.42 | 99.28 | 80.04 | 85.93 | — | — |
| PointMetaBase-XL[33] | 98.38 | 94.21 | 93.62 | 99.11 | 92.35 | 89.40 | 15.30 | 9.20 |
| DeLA[34] | 96.87 | 95.34 | 93.65 | 96.89 | 92.69 | 91.36 | 7.00 | 14.00 |
| DeLA + X-3D[35] | 98.59 | 96.81 | 94.29 | 96.36 | 94.30 | 92.22 | 18.10 | 9.80 |
| PTv3[23] | 98.64 | 97.11 | 94.37 | 96.56 | 94.42 | 92.12 | — | — |
| PCM[36] | 98.79 | 97.27 | 95.69 | 96.96 | 96.17 | 93.93 | 34.2 | — |
| SSCNet (Ours) | 98.74 | 97.99 | 96.20 | 97.85 | 94.36 | 96.40 | 12.54 | 20.3 |
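In Table 1 the mIoU column equals the unweighted mean of the three per-class IoU columns; for SSCNet, (97.85 + 94.36 + 96.40) / 3 ≈ 96.20. For reference, the sketch below computes the standard OA, mAcc and mIoU definitions from a confusion matrix whose rows are true classes and whose columns are predicted classes. The function name `segmentation_metrics` and the toy matrix are illustrative only and are not taken from the paper.

```python
from typing import List, Tuple


def segmentation_metrics(conf: List[List[int]]) -> Tuple[float, float, float]:
    """Return (OA, mAcc, mIoU) in percent from conf[true_class][predicted_class].

    Assumes every class occurs at least once in the ground truth.
    """
    c = len(conf)
    total = sum(sum(row) for row in conf)
    oa = sum(conf[i][i] for i in range(c)) / total       # correctly labelled / all points

    accs, ious = [], []
    for i in range(c):
        tp = conf[i][i]
        n_true = sum(conf[i])                            # TP + FN
        n_pred = sum(conf[r][i] for r in range(c))       # TP + FP
        accs.append(tp / n_true)                         # per-class accuracy (recall)
        ious.append(tp / (n_true + n_pred - tp))         # IoU = TP / (TP + FP + FN)
    return 100 * oa, 100 * sum(accs) / c, 100 * sum(ious) / c


if __name__ == "__main__":
    # Toy matrix for (background, power line, tower); not data from the paper.
    toy = [[90, 5, 5],
           [2, 8, 0],
           [1, 0, 9]]
    oa, macc, miou = segmentation_metrics(toy)
    print(f"OA={oa:.2f}  mAcc={macc:.2f}  mIoU={miou:.2f}")
```

For the toy matrix the function returns OA ≈ 89.17, mAcc ≈ 86.67 and mIoU ≈ 66.90. Background points dominate the total count, so OA stays high even when the thin classes are poorly segmented. Table 1 is consistent with this: OA varies only between 96.87 and 98.90, while mIoU ranges from 78.92 to 96.20.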

Table 2 Category-wise Point Cloud Statistics Before and After Filtering (Unit: $ 10^4 $ points)

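| Scene | Raw: power line | Raw: tower | Raw: background | Filtered: power line | Filtered: tower | Filtered: background | Background removal rate (%) |
|---|---|---|---|---|---|---|---|
| S1 | 86.9 | 16.4 | 4890.0 | 86.1 | 15.7 | 1415.0 | 71.1 |
| S2 | 67.9 | 1625.0 | 4042.0 | 65.7 | 1622.1 | 45.9 | 98.9 |
| S3 | 117.6 | 1090.4 | 3162.0 | 116.8 | 1087.5 | 397.0 | 87.5 |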
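The background removal rate in Table 2 is (raw background − filtered background) / raw background × 100. The sketch below recomputes it from the tabulated counts. It also computes the share of power-line and tower points retained after filtering, which is an extra derived quantity and not a column of the original table. The names `TABLE2` and `filtering_stats` are illustrative.

```python
from typing import Dict, Tuple

# Rows of Table 2 (unit: 10^4 points):
# (raw line, raw tower, raw background, filtered line, filtered tower, filtered background)
TABLE2: Dict[str, Tuple[float, float, float, float, float, float]] = {
    "S1": (86.9, 16.4, 4890.0, 86.1, 15.7, 1415.0),
    "S2": (67.9, 1625.0, 4042.0, 65.7, 1622.1, 45.9),
    "S3": (117.6, 1090.4, 3162.0, 116.8, 1087.5, 397.0),
}


def filtering_stats(row: Tuple[float, ...]) -> Tuple[float, float, float]:
    """Return (background removal %, power-line retention %, tower retention %)."""
    line0, tower0, bg0, line1, tower1, bg1 = row
    removal = 100.0 * (bg0 - bg1) / bg0      # share of background points filtered out
    line_keep = 100.0 * line1 / line0        # share of power-line points kept
    tower_keep = 100.0 * tower1 / tower0     # share of tower points kept
    return removal, line_keep, tower_keep


if __name__ == "__main__":
    for scene, row in TABLE2.items():
        removal, line_keep, tower_keep = filtering_stats(row)
        print(f"{scene}: background removed {removal:.1f}%, "
              f"power line kept {line_keep:.1f}%, tower kept {tower_keep:.1f}%")
```

Applied to the three rows, it reproduces the listed 71.1% (S1) and 98.9% (S2). For S3 the tabulated counts give 87.4%, against 87.5% in the table. The retention figures show that at least 95.7% of power-line and tower points survive filtering in every scene; the lowest value is for the S1 towers, 15.7 of 16.4.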

Table 3 Ablation results (%)

| Setting | PRST / BGF | OA | mIoU | mAcc |
|---|---|---|---|---|
| 1 | | 98.12 | 94.13 | 91.74 |
| 2 | $ \checkmark$ | 98.78 | 95.47 | 93.06 |
| 3 | $ \checkmark$ | 98.57 | 95.86 | 96.84 |
| 4 | $ \checkmark$ $ \checkmark$ | 98.74 | 96.20 | 97.99 |

Table 4 Comparison of mainstream methods on the S3DIS dataset

| Method | Params (M) | OA (%) | mAcc (%) | mIoU (%) |
|---|---|---|---|---|
| PointNet++[14] | 1.0 | 83.0 | — | 53.5 |
| DGCNN[20] | 1.3 | — | — | 47.9 |
| KPConv[31] | 15.0 | — | 72.8 | 67.1 |
| PointNext-XL[32] | 41 | 91.0 | 77.2 | 71.1 |
| PTv1[37] | — | 90.8 | 76.5 | 70.4 |
| PTv2[22] | 11.3 | 91.6 | 78.0 | 72.7 |
| PointMetaBase-XL[33] | 15.3 | 90.6 | — | 71.5 |
| DeLA[34] | 7.0 | 92.2 | 80.0 | 74.1 |
| DeLA+X-3D[35] | 8.0 | 92.2 | 80.1 | 74.3 |
| PTv3[23] | 34.2 | — | — | 74.7 |
| PCM[36] | — | 92.9 | 81.6 | 74.1 |
| SSCNet | 10.2 | 92.3 | 82.1 | 75.1 |

[1] Shen Y, Huang J, Wang J, Jiang J, Li J, Ferreira V. A review and future directions of techniques for extracting powerlines and pylons from LiDAR point clouds. International Journal of Applied Earth Observation and Geoinformation, 2024, 132: 104056 doi: 10.1016/j.jag.2024.104056

[2] Jung J, Che E, Olsen M J, Shafer K C. Automated and efficient powerline extraction from laser scanning data using a voxel-based subsampling with hierarchical approach. ISPRS Journal of Photogrammetry and Remote Sensing, 2020, 163: 343−361 doi: 10.1016/j.isprsjprs.2020.03.018

[3] Liu X, Miao X, Jiang H, Chen J, Wu M, Chen Z. Tower masking MIM: A self-supervised pretraining method for power line inspection. IEEE Transactions on Industrial Informatics, 2023, 20(1): 513−523

[4] Wang F, Han G, Guo X, Shi Z, Wang J. Segmentation and clearance intrusion detection of overhead transmission line point cloud scene based on LiDAR data. Bulletin of Surveying and Mapping, 2024(5): 133

[5] Kim H B, Sohn G, et al. 3D classification of power-line scene from airborne laser scanning data using random forests. International Archives of the Photogrammetry, Remote Sensing and Spatial Information Sciences, 2010, 38(1999): 126−132

[6] Lehtomäki M, Kukko A, Matikainen L, Hyyppä J, Kaartinen H, Jaakkola A. Power line mapping technique using all-terrain mobile laser scanning. Automation in Construction, 2019, 105: 102802 doi: 10.1016/j.autcon.2019.03.023

[7] Fischler M A, Bolles R C. Random sample consensus: A paradigm for model fitting with applications to image analysis and automated cartography. Communications of the ACM, 1981, 24(6): 381−395 doi: 10.1016/b978-0-08-051581-6.50070-2

[8] Shen X, Qian C, Du Y, Yu X, Zhang R. An automatic extraction algorithm of high voltage transmission lines from airborne LiDAR point cloud data. Turkish Journal of Electrical Engineering and Computer Sciences, 2018, 26(4): 2043−2055 doi: 10.3906/elk-1801-23

[9] Zhu S, et al. A deep-learning-based method for extracting an arbitrary number of individual power lines from UAV-mounted laser scanning point clouds. Remote Sensing, 2024, 16(2): 393 doi: 10.3390/rs16020393

[10] Maturana D, Scherer S. VoxNet: A 3D convolutional neural network for real-time object recognition//2015 IEEE/RSJ International Conference on Intelligent Robots and Systems. Hamburg, Germany: IEEE, 2015: 922−928

[11] Shan X, Sun Z, Zeng Z. RFNet: Convolutional neural network for 3D point cloud classification. Acta Automatica Sinica, 2023, 49(11): 2350−2359

[12] Lu B, Fan X. Research on 3D point cloud skeleton extraction based on improved adaptive k-means clustering. Acta Automatica Sinica, 2022, 48(8): 1994−2006 doi: 10.16383/j.aas.c200284

[13] Qi C R, Su H, Mo K, Guibas L J. PointNet: Deep learning on point sets for 3D classification and segmentation//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Honolulu, USA: IEEE, 2017: 652−660

[14] Qi C R, Yi L, Su H, Guibas L J. PointNet++: Deep hierarchical feature learning on point sets in a metric space. Advances in Neural Information Processing Systems, 2017, 30

[15] Dong J, Chen H, Chen S, Zhao Y, Yang N. PSFE-Net: Semantic segmentation network for airborne LiDAR transmission corridor scenes inspection//2024 9th Asia Conference on Power and Electrical Engineering. Beijing, China: IEEE, 2024: 1538−1542

[16] Liu X, Shuang F, Li Y, Zhang L, Huang X, Qin J. SS-IPLE: Semantic segmentation of electric power corridor scene and individual power line extraction from UAV-based LiDAR point cloud. IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, 2023, 16: 38−50 doi: 10.1109/JSTARS.2023.3289599

[17] Huang Z, Gu X, Wang H, Zhang X, Zhang X. An improved PointNet++ model for semantic segmentation of transmission tower point clouds. Electric Power, 2023, 56(3)

[18] Li W, Luo Z, Xiao Z, Chen Y, Wang C, Li J. A GCN-based method for extracting power lines and pylons from airborne LiDAR data. IEEE Transactions on Geoscience and Remote Sensing, 2021, 60: 1−14 doi: 10.1109/tgrs.2021.3076107

[19] Li G, Muller M, Thabet A, Ghanem B. DeepGCNs: Can GCNs go as deep as CNNs?//Proceedings of the IEEE/CVF International Conference on Computer Vision. Seoul, South Korea: IEEE, 2019: 9267−9276

[20] Wang Y, Sun Y, Liu Z, Sarma S E, Bronstein M M, Solomon J M. Dynamic graph CNN for learning on point clouds. ACM Transactions on Graphics, 2019, 38(5): 1−12

[21] Li J, Wang J, Wang L, Li M, Yang L, Zhao Y. Dual-attention mechanism for semantic segmentation of power corridor point clouds. Bulletin of Surveying and Mapping, 2023(4): 127

[22] Wu X, Lao Y, Jiang L, Liu X, Zhao H. Point transformer v2: Grouped vector attention and partition-based pooling. Advances in Neural Information Processing Systems, 2022, 35: 33330−33342 doi: 10.52202/068431-2415

[23] Wu X, et al. Point transformer v3: Simpler faster stronger//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Seattle, USA: IEEE, 2024: 4840−4851

[24] Bu L, Wang Y, Ma Q, Hou Z, Wang R, Bu F. Deep hierarchical learning on point clouds in feature space. Neurocomputing, 2025, 630: 129647 doi: 10.1016/j.neucom.2025.129647

[25] Liu D, Hu W, Li X. Point cloud attacks in graph spectral domain: When 3D geometry meets graph signal processing. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2023, 46(5): 3079−3095 doi: 10.1109/tpami.2023.3339130

[26] Wen C, Long J, Yu B, Tao D. PointWavelet: Learning in spectral domain for 3-D point cloud analysis. IEEE Transactions on Neural Networks and Learning Systems, 2025, 36(3): 4400−4412 doi: 10.1109/TNNLS.2024.3363244

[27] Rizaldy A, Gloaguen R, Fassnacht F E, Ghamisi P. HyperPointFormer: Multimodal fusion in 3-D space with dual-branch cross-attention transformers. IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, 2025, 18: 21254−21274 doi: 10.1109/JSTARS.2025.3595648

[28] Liang D, Feng T, Zhou X, Zhang Y, Zou Z, Bai X. Parameter-efficient fine-tuning in spectral domain for point cloud learning. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2025

[29] Yang Y, et al. RALoc: Enhancing outdoor LiDAR localization via rotation awareness//Proceedings of the IEEE/CVF International Conference on Computer Vision. 2025: 3304−3313

[30] Zhang W, et al. An easy-to-use airborne LiDAR data filtering method based on cloth simulation. Remote Sensing, 2016, 8(6): 501 doi: 10.3390/rs8060501

[31] Thomas H, Qi C R, Deschaud J E, Marcotegui B, Goulette F, Guibas L J. KPConv: Flexible and deformable convolution for point clouds//Proceedings of the IEEE/CVF International Conference on Computer Vision. Seoul, South Korea: IEEE, 2019: 6411−6420

[32] Qian G, et al. PointNeXt: Revisiting PointNet++ with improved training and scaling strategies. Advances in Neural Information Processing Systems, 2022, 35: 23192−23204 doi: 10.52202/068431-1685

[33] Lin H, et al. Meta architecture for point cloud analysis//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Vancouver, Canada: IEEE, 2023: 17682−17691

[34] Yang W, et al. DeLA: An extremely faster network with decoupled local aggregation for large scale point cloud learning. International Journal of Applied Earth Observation and Geoinformation, 2024, 135: 104255 doi: 10.1016/j.jag.2024.104255

[35] Sun S, Rao Y, Lu J, Yan H. X-3D: Explicit 3D structure modeling for point cloud recognition//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Seattle, USA: IEEE, 2024: 5074−5083

[36] Zhang T, et al. Point cloud Mamba: Point cloud learning via state space model//Proceedings of the AAAI Conference on Artificial Intelligence. 2025, 39(10): 10121−10130

[37] Zhao H, Jiang L, Jia J, Torr P H S, Koltun V. Point transformer//Proceedings of the IEEE/CVF International Conference on Computer Vision. Montreal, Canada: IEEE, 2021: 16259−16268
